What You're Actually Talking To When You Use ChatGPT

Here's what's actually happening under the hood, and what it means for how you use AI in your business.

You type a question into ChatGPT. It gives you an answer that sounds confident, specific, and completely wrong. Maybe it invented a law firm that doesn't exist. Maybe it cited a study that was never published. Maybe it told you the wrong year for something you could verify with a five-second Google search.

What just happened?

You didn't talk to a genius who made a mistake. You didn't talk to a search engine that returned a bad result. You talked to something that most people fundamentally misunderstand — and that misunderstanding is where the trouble starts.

A Very Sophisticated Autocomplete

Here's the simplest honest explanation of what ChatGPT is.

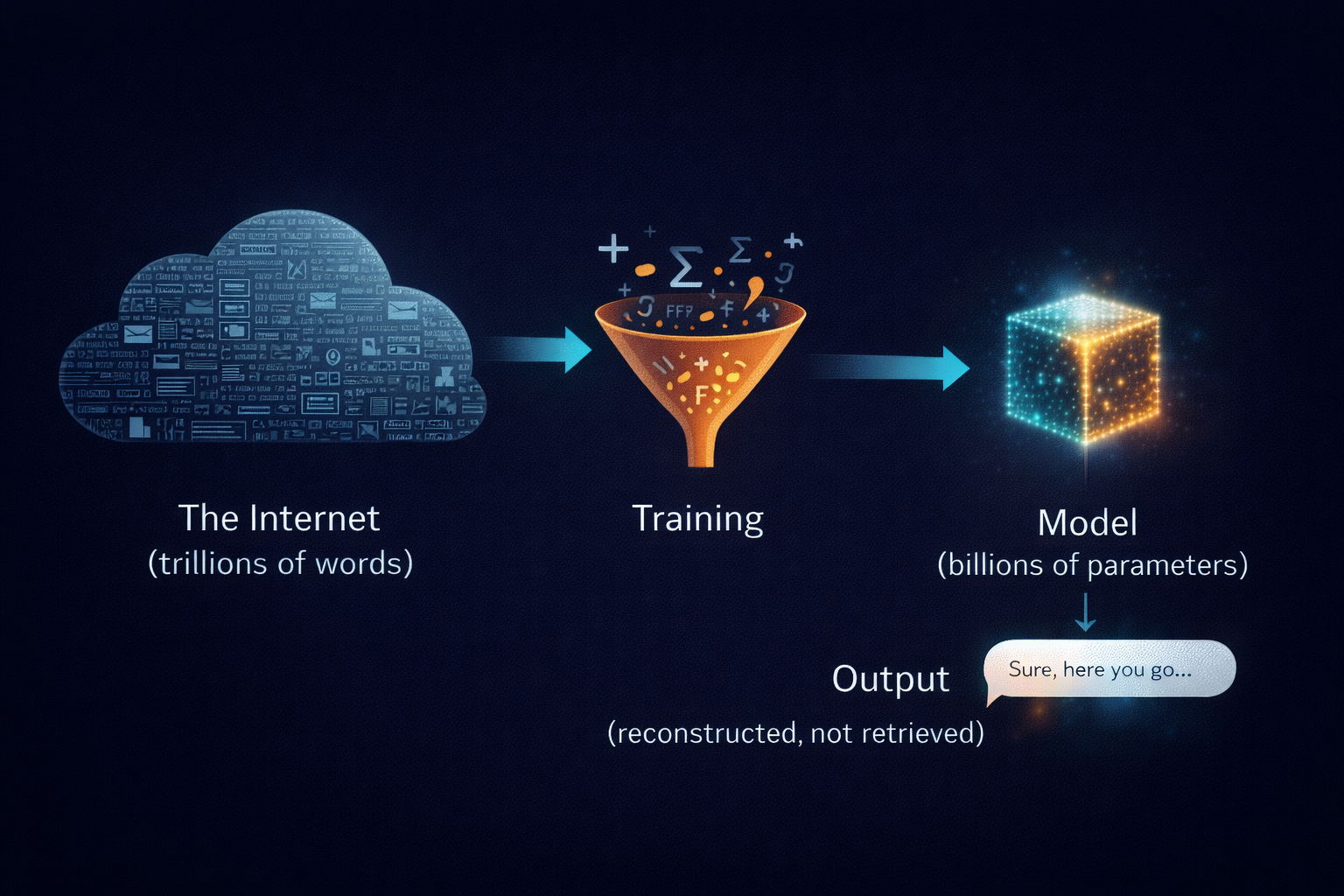

Imagine you took the entire internet — every Wikipedia article, every Reddit thread, every news story, every forum post, every blog, every textbook that's been digitized — and you compressed it. Not into a nice organized database you could search through. Into a statistical pattern. A massive web of probabilities about which words tend to follow which other words, in which contexts, on which topics.

Andrej Karpathy, who helped build some of the AI systems at OpenAI and Tesla, uses an analogy that cuts through the noise. He describes these models as a "lossy zip file" of the internet. You know how when you compress a photo too aggressively it gets blurry? You can still see the shapes. You can recognize the face. But the fine details are gone — a smear where the texture used to be.

That's what happened to the internet inside ChatGPT. The knowledge is in there, but it's compressed into billions of numerical weights. Some things came through clearly. Some things came through blurry. And some things — the obscure facts, the niche details, the things that only appeared a handful of times in the training data — came through as vague impressions rather than accurate records.

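When you ask ChatGPT a question, the model itself isn't looking something up or searching a database (unless it has been given a search tool for that conversation). It's reconstructing an answer from those compressed patterns, one word at a time. Strictly speaking, the unit is a "token," which is often a whole word and sometimes a piece of one. Each word is a statistical guess about what should come next, given everything that came before it.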

That's it. That's what you're talking to. A very sophisticated autocomplete that compressed the internet and is now reconstructing it from memory — blurry spots and all.
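For the curious, the word-by-word guessing loop can be sketched in a few lines of Python. Treat this as a toy under loud assumptions, not as how ChatGPT is built: the tiny corpus is made up, and the "model" just counts which word follows which (a so-called bigram model), while a real system uses a neural network with billions of weights that looks at far more than the previous word. The loop is the same basic idea: look at what's been written so far, get probabilities for the next word, pick one, repeat.

```python
import random
from collections import Counter, defaultdict

# A tiny, made-up "training corpus" for illustration only. Real models learn
# from vastly more text and use a neural network, not a table of word pairs.
CORPUS = (
    "the cat sat on the mat . "
    "the dog sat on the rug . "
    "the cat chased the dog ."
).split()


def build_bigram_counts(words):
    """Count how often each word is followed by each other word."""
    counts = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        counts[prev][nxt] += 1
    return counts


def next_word_probabilities(counts, prev):
    """Turn the counts of words seen after `prev` into probabilities summing to 1."""
    followers = counts[prev]
    total = sum(followers.values())
    return {word: n / total for word, n in followers.items()}


def generate(counts, start, length, seed=None):
    """Build text one word at a time; each word is a weighted random pick
    based only on the word before it."""
    rng = random.Random(seed)
    words = [start]
    for _ in range(length):
        probs = next_word_probabilities(counts, words[-1])
        if not probs:  # this word was never followed by anything in the corpus
            break
        choices, weights = zip(*probs.items())
        words.append(rng.choices(choices, weights=weights)[0])
    return " ".join(words)


counts = build_bigram_counts(CORPUS)
print(next_word_probabilities(counts, "the"))
print(generate(counts, "the", 8, seed=1))
```

In this toy corpus, "cat" and "dog" each followed "the" twice and "mat" and "rug" once each, so after "the" the sketch gives "cat" and "dog" a one-in-three chance and "mat" and "rug" a one-in-six chance. Nothing in the loop checks whether the resulting sentence is true; it only checks what tends to come next.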

Why It Sounds So Confident About Wrong Things

This is the part that trips up almost everyone.

When ChatGPT gives you a wrong answer, it doesn't hedge. It doesn't say "I'm not sure about this." It delivers the wrong information in the same calm, authoritative tone it uses for everything else. And that's not a bug in the software. It's a direct consequence of how the system was built.

Think about it from the model's perspective. During training, it absorbed millions of question-and-answer exchanges, and in those exchanges the person answering almost always sounded confident. Nobody in the training data asked "Who is Abraham Lincoln?" and got back "Uh, I think maybe he was a president? Not sure." The answers were stated with authority.

So the model learned the style of confident answers. It learned it so well that it applies that style to everything — including the things it doesn't actually know.

Karpathy demonstrates this with a simple test. He asks a model "Who is Orson Kovats?" — a name he made up. The model doesn't say "I don't know." Instead it confidently tells him Orson Kovats is an American science fiction author. Ask again and Orson Kovats becomes a fictional TV character from the 1950s. Ask a third time and he's a former minor league baseball player.

The model isn't lying. It doesn't have the concept of lying. It's doing the only thing it knows how to do: picking a plausible next word from the probabilities it learned, then the next, and so on. Because each word is drawn from those odds rather than always being the single top choice, the same question can produce a different answer each time. And the pattern says: when someone asks "Who is [name]?", you answer with a confident biography. So it generates one. Every time. Whether the person is real or not.
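Karpathy's made-up-name test can be mimicked with a toy sketch. The probabilities below are invented for illustration (they are not taken from any real model), and the three answer openings simply reuse the ones from his demonstration. The sketch assumes the model picks by sampling, drawing an option at random in proportion to its odds, which is how chat models typically generate text. The point it shows: every option on the menu is a confident biography, and there is no "I don't know" entry to land on, so the sampler always returns something.

```python
import random

# Invented probabilities, for illustration only: how a "Who is [name]?" answer
# might begin when the name never appeared in training. Every option is a
# confident biography because that is the only pattern on offer, and the
# odds still have to add up to 1.
ANSWER_OPENINGS = {
    "an American science fiction author": 0.40,
    "a fictional TV character from the 1950s": 0.35,
    "a former minor league baseball player": 0.25,
}


def answer(name, rng):
    """Sample one answer. There is no 'skip' option: something is always picked."""
    options, weights = zip(*ANSWER_OPENINGS.items())
    choice = rng.choices(options, weights=weights)[0]
    return f"{name} is {choice}."


rng = random.Random(0)
for _ in range(3):
    print(answer("Orson Kovats", rng))
```

Run it a few times with different seeds and the same question gets different, equally confident answers. Newer models reduce this by learning that "I don't know" is sometimes the likeliest continuation, but that only works if such answers were in their training.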

This is what people call hallucination. And the name is actually perfect, because that's exactly what's happening. The model is generating something that looks and feels like a real memory but was never actually there. It's filling in the blurry parts of that compressed zip file with plausible-sounding details.

Newer models have gotten better at this. They've been trained with examples where the correct answer is "I don't know," so they can sometimes catch themselves. But the underlying tendency is still there. It's built into the architecture. These systems must produce the next word. They don't have a "skip" button.

The Swiss Cheese Problem

Here's what makes this especially tricky for anyone trying to use AI in their work.

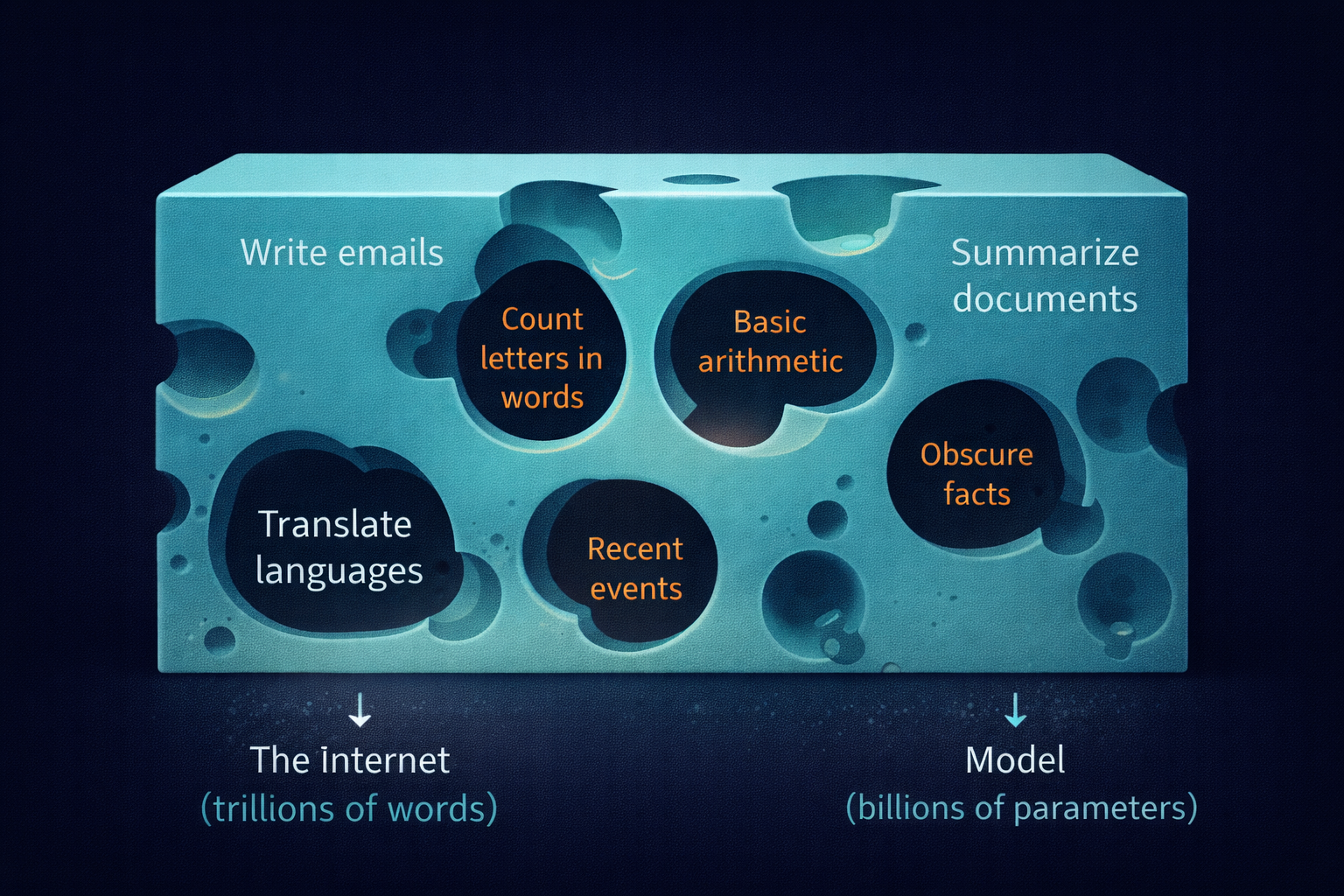

The capabilities aren't evenly distributed.

ChatGPT can write a perfectly structured legal memo and then botch basic arithmetic. It can explain quantum mechanics in terms a ten-year-old would understand and then tell you that 9.11 is bigger than 9.9. It can generate working code for a complex application and then fail to count the number of letters in a word.
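The 9.11 example is easy to check for yourself. As ordinary decimal numbers, 9.9 means 9.90, which is larger than 9.11. The second half of the sketch shows one plausible reading under which "9.11" really does come after "9.9": software version numbers (or chapter and verse numbers), where each part after the dot is compared as a whole number. That this kind of text is what confuses the model is an assumption for illustration, not a confirmed explanation, and `version_tuple` is a hypothetical helper written just for this example.

```python
from decimal import Decimal

# Read as decimal numbers: 9.9 is 9.90, so it is the larger value.
print(Decimal("9.9") > Decimal("9.11"))  # True


# Hypothetical helper: read "9.11" the way version numbers are compared,
# splitting on the dot and treating each part as a whole number.
def version_tuple(s):
    return tuple(int(part) for part in s.split("."))


# Read as versions: (9, 11) comes after (9, 9), so "9.11" looks "bigger".
print(version_tuple("9.11") > version_tuple("9.9"))  # True
```

Both lines print `True`, which is exactly the problem: the same four characters support two different answers, and a model that has seen both kinds of text can confidently pick the wrong one.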

Karpathy calls this the "Swiss cheese" model of AI capabilities. Imagine a block of Swiss cheese. Most of it is solid. You can lean on it, build on it, trust it. But there are holes scattered throughout — and you can't always see them from the surface.

The solid parts are impressive. When the model is operating in an area where the training data was abundant and consistent — common facts, well-documented topics, standard writing formats — it performs remarkably well. Sometimes better than you'd expect.

But the holes are real. And they're unpredictable. The model might nail every question about American history and then confidently tell you the wrong population of your city. It might write flawless Python code for ten tasks and then produce something completely broken on the eleventh. There's no warning label on the holes. The model doesn't change its tone when it's about to step into one.

This is why the people who get the most out of AI tools aren't the ones who trust them the most. They're the ones who understand where the holes are likely to be — and check the work in those areas.

What This Means for Your Business

So you've got a tool that compressed the internet into a blurry pattern, generates answers one word at a time by statistical guessing, sounds confident whether it's right or wrong, and has unpredictable gaps in its knowledge.

That sounds like a terrible tool. But it's actually an incredibly useful one — if you use it correctly.

The key is matching the tool to the task. Here's how to think about it.

Where AI works well

Drafts, not finals. ChatGPT is excellent at producing a first draft of almost anything: emails, proposals, product descriptions, social media posts, reports. The draft gives you a starting point that's 70-80% of the way there. You edit, adjust the tone, fix the details, and you've saved real time. How useful that draft is depends more on the context you give it than on which model you use.

Tasks where you can check the output. If you know enough about the topic to spot errors, AI becomes a powerful assistant. A contractor using it to draft a project estimate can easily catch if the numbers are off. A restaurant owner using it to write menu descriptions can tell if something sounds wrong. The expert plus the tool is the winning combination.

Brainstorming and exploration. The model compressed a broad range of human knowledge, which makes it genuinely good at generating ideas and suggesting angles you hadn't considered. You wouldn't trust a brainstorming session to be 100% accurate — and you shouldn't trust AI brainstorming to be either. But as a thinking partner, it's useful.

Repetitive formatting and transformation. Taking data from one format and putting it into another. Summarizing long documents. Reformatting messy notes into clean templates. These are pattern-matching tasks where hallucination risk is lower, because the model is working from material you supplied rather than reconstructing facts from memory.

Where AI falls short

Anything where a wrong answer is expensive. Legal advice. Medical recommendations. Financial calculations. Tax filings. If an error could cost you money, reputation, or safety, you need a human checking every output, and you need to treat the AI's contribution as a rough draft, not a finished product.

Niche or recent information. Remember the blurry zip file. The things that appeared thousands of times in the training data (major historical events, popular science, widely known facts) came through clearly. The things that appeared rarely (your specific industry regulations, local zoning codes) came through blurry or not at all. And anything newer than the model's training data, like a company founded last year, was never in the file to begin with. If you're asking about something obscure, you're walking on Swiss cheese.

Tasks that require judgment, not knowledge. Should you fire this vendor? Is this partnership worth pursuing? AI can organize the information you need to make those calls, but the decision itself requires human judgment. The model doesn't understand your business. It understands patterns in text.

Working with your own data — if it's messy. AI tools are only as good as what you feed them. If your business data is scattered across spreadsheets, email threads, and sticky notes, the AI can't magically organize what you haven't organized yourself.

The One Rule That Matters

If you take one thing from this article, let it be this: treat ChatGPT like a confident new employee who has read a lot but experienced nothing.

They'll give you impressive-sounding answers. They'll produce work quickly. They'll rarely tell you they don't know something; more often they'll just guess and hope you don't notice. They're genuinely helpful when you give them clear tasks and check their work. They're genuinely dangerous when you hand them something important and walk away.

The businesses that are getting real value from AI right now aren't the ones using it as a replacement for thinking. They're the ones using it as a tool that amplifies the thinking they're already doing. They draft with it, check the output, and move faster than they could alone.

That's what you're actually talking to. Not a genius. Not a search engine. A very fast, very well-read, very confident guesser that works best when you stay in the room.

Want Help Figuring Out Where AI Actually Fits?

The difference between AI that saves you time and AI that creates problems is knowing which tasks to hand it and which to keep. We help businesses figure that out — no hype, no buzzwords, just an honest look at what works for your specific operation.

Not every tool is right for every job. The smart move is knowing which is which.
