AI Agent Security Is a $200 Billion Problem. Most Businesses Are Flying Blind.

BCG calls agentic AI a $200 billion opportunity, but most businesses have no idea what their AI agents are doing with their data — or who's watching.

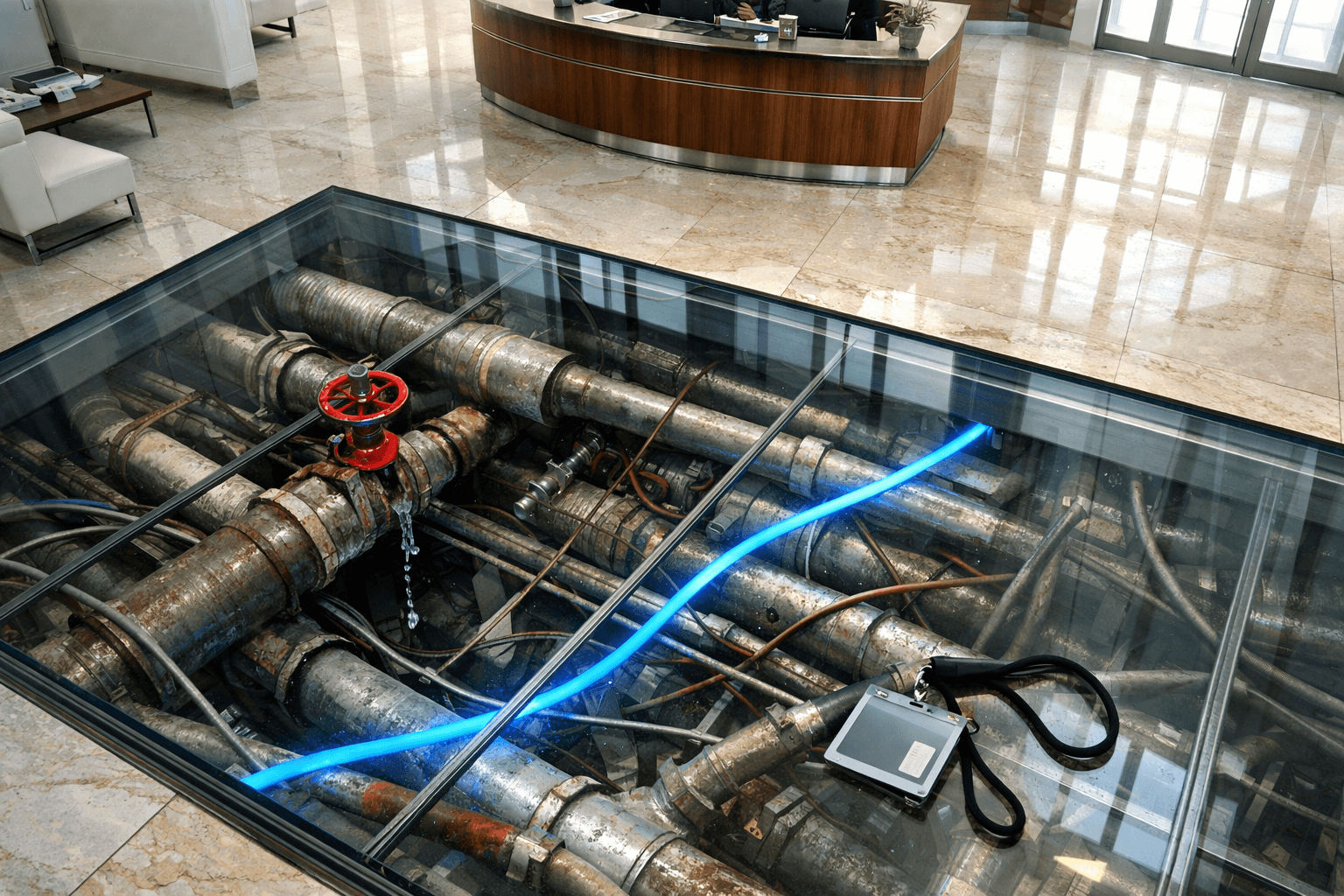

Say you hired a new employee and gave them a master key to every room in the building. Access to your financial records, your customer database, your email, your scheduling system. Then you left for the weekend and didn't set up a single camera, lock, or access log.

That's roughly the situation most businesses are in with AI agents right now.

The Numbers Are Big. The Gaps Are Bigger.

BCG estimates that agentic AI in tech services alone is a $200 billion opportunity. Goldman Sachs reports that 93% of small businesses using AI say it's had a positive impact. The momentum is real. Businesses are connecting AI agents to their systems, seeing genuine results, and moving fast.

But here's the part that doesn't make the headlines: only 14% of those small businesses have fully integrated AI into their operations. The other 86% are somewhere in the middle — using AI tools here and there, often without a clear picture of what those tools can access, what they're doing, or what happens when something goes wrong.

That gap between adoption and integration is where the risk lives.

What "AI Agent Security" Actually Means

If you've read about how to secure your AI agents, you know the basics: AI agents aren't just chatbots anymore. They read your files, call your APIs, access your databases, and take actions on your behalf. Each of those capabilities is useful. Each one is also a door that can swing the wrong way.

AI agent security means answering three questions:

- What can this agent access? Not just what you intended — what it can reach. Most agents have broader access than their creators realize.

- What is this agent doing right now? Can you see every action it takes, every file it reads, every API call it makes? Or is it a black box?

- What happens when something goes wrong? Is there a log? An audit trail? A way to roll back what the agent did?

For most businesses, the honest answer to all three is "I don't know."

The Logging Problem Nobody Talks About

Bessemer Venture Partners recently called securing AI agents "the defining cybersecurity challenge of 2026." That tracks with what we're seeing across the industry.

But the most striking finding from their research isn't about exotic attacks or sophisticated exploits. It's about something much more basic: over 50% of builders say the lack of logging and audit trails is their primary obstacle to securing AI agents.

Think about what that means. For more than half of the people building AI agent systems, the main obstacle is that they can't reliably tell you what those systems did yesterday. Not because they don't want to, but because the infrastructure to track agent behavior doesn't exist in most setups.

Your accountant keeps a ledger. Your warehouse has inventory tracking. Your website has access logs. But your AI agent — the one with access to all of those systems — probably has nothing. No record of what it read, what it changed, or why.

This isn't a sophisticated security problem. It's a basic accountability problem, and it's the same kind of thing that trips up AI agent projects in general.
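To make "a record of what it read, what it changed, or why" concrete, here is a minimal sketch in Python. It is an illustration under assumptions, not a feature of any particular agent platform: the `AuditLog` class, the `audited` wrapper, and the agent and record names are all hypothetical, and a real setup would write to durable, tamper-resistant storage rather than a list in memory.

```python
import json
import time
from typing import Any, Callable


class AuditLog:
    """In-memory, append-only record of agent actions (hypothetical helper)."""

    def __init__(self) -> None:
        self.entries: list[dict[str, Any]] = []

    def record(self, agent_id: str, action: str, target: str,
               reason: str, ok: bool) -> None:
        self.entries.append({
            "ts": time.time(),     # when it happened
            "agent_id": agent_id,  # which agent acted
            "action": action,      # e.g. "read" or "update"
            "target": target,      # what the agent touched
            "reason": reason,      # why the agent says it acted
            "ok": ok,              # whether the call succeeded
        })

    def to_jsonl(self) -> str:
        """Serialize the entries as one JSON object per line."""
        return "\n".join(json.dumps(e, sort_keys=True) for e in self.entries)


def audited(log: AuditLog, agent_id: str, action: str, target: str,
            reason: str, fn: Callable[[], Any]) -> Any:
    """Run fn and log the attempt whether it succeeds or raises."""
    try:
        result = fn()
    except Exception:
        log.record(agent_id, action, target, reason, ok=False)
        raise
    log.record(agent_id, action, target, reason, ok=True)
    return result


# Hypothetical usage: an invoicing agent reads one CRM record.
log = AuditLog()
audited(log, "invoice-agent", "read", "crm/customers/1042",
        "look up billing contact", lambda: {"email": "billing@example.com"})
print(log.to_jsonl())
```

The point of the wrapper is that failed attempts are logged too. After an incident, what the agent tried to do is often as informative as what it actually did.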

The Identity Problem

Here's something even more fundamental. When a person logs into your CRM, they have an identity — a username, a role, permissions tied to that role. You can see who accessed what record and when.

According to Bessemer's research, only 21.9% of organizations treat AI agents as independent identity-bearing entities in their security model. The rest? The agent either runs under a human's credentials — meaning you can't distinguish between what the person did and what the agent did — or it runs with a shared service account that has broad access to everything.

This matters because when something goes wrong — and eventually something will — you need to know whether the agent did it, a person did it, or something exploited the agent to do it. Without agent-specific identities, you can't answer that question.

If you're thinking about governance tools for your AI agents, identity is the foundation. Everything else — permissions, audit trails, access controls — builds on knowing who did what.
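As a rough sketch of what an agent-specific identity could look like, the Python below gives each agent its own ID, a named human owner, and an explicit list of scopes. Everything here is an assumption made for illustration: the `AgentIdentity` class, the `authorize` function, and the scope strings are hypothetical, and in practice this role is usually played by your identity provider or the service accounts of the systems the agent connects to.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentIdentity:
    """An identity that belongs to one agent, not to a person (hypothetical)."""
    agent_id: str       # unique name that shows up in logs
    owner: str          # the human accountable for this agent
    scopes: frozenset   # the only actions this agent may perform


def authorize(identity: AgentIdentity, scope: str) -> str:
    """Allow the action only if the agent holds the scope; otherwise refuse."""
    if scope not in identity.scopes:
        raise PermissionError(f"{identity.agent_id} lacks scope {scope!r}")
    return f"{identity.agent_id} performed {scope}"


# Hypothetical usage: a scheduling agent that can touch calendars and nothing else.
scheduler = AgentIdentity(
    agent_id="scheduling-agent",
    owner="ops@example.com",
    scopes=frozenset({"calendar:read", "calendar:write"}),
)

print(authorize(scheduler, "calendar:read"))
try:
    authorize(scheduler, "crm:read")
except PermissionError as err:
    print(err)
```

Because every allowed or refused action carries the agent's own ID, you can separate what the agent did from what its human owner did.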

The Vendor Problem

The market knows this is a problem. Dozens of vendors have sprung up offering "AI security" tools. Monitoring platforms, guardrails, sandboxing, runtime protection — the landscape is crowded and getting more crowded every week.

The challenge for business owners is that most of these are point solutions. One tool monitors prompts. Another scans outputs. A third handles authentication. None of them give you the complete picture.

It's like buying a deadbolt, a motion sensor, and a security camera from three different companies that don't talk to each other. Each one does its job, but together they still don't add up to a security system.

What's missing is a comprehensive framework — a way to think about agent security as a whole, not as a collection of individual fixes.

What You Can Do Right Now

You don't need to buy anything to start. You need to ask the right questions.

1. Inventory your agent access. List every system your AI agents can reach. Email, CRM, file storage, databases, APIs. If you don't know the full list, that's the first problem to solve.

2. Turn on logging. Whatever agent platform you're using, find the logging settings and set them to the most detailed level available. If there are no logging settings, that tells you something important about the platform.

3. Create agent-specific accounts. Don't let agents run under a human's credentials. Create dedicated accounts with the minimum permissions the agent needs — and nothing more.

4. Review access monthly. Agent capabilities tend to grow over time as people add new tools and connections. Set a recurring reminder to audit what your agents can access and remove anything they don't need.

5. Plan for failure. Assume something will go wrong. What's your response plan? Can you disable the agent quickly? Can you identify what it did? Can you undo it?

These aren't advanced security measures. They're the basics that most AI projects skip on the way to production.
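Steps 1, 4, and 5 can start as something as small as a script. The Python sketch below assumes you keep a simple inventory of what each agent has been granted and what it actually needs. The `AgentRecord` class, the helper functions, and the system names are all hypothetical. In a real environment, revoking access and disabling an agent would mean calling the admin tools of the systems involved, not flipping fields in memory.

```python
from dataclasses import dataclass


@dataclass
class AgentRecord:
    """One row in a hypothetical agent access inventory."""
    agent_id: str
    granted: set          # systems the agent can reach today
    needed: set           # systems the agent needs for its job
    enabled: bool = True


def excess_access(agent: AgentRecord) -> list:
    """Systems the agent can reach but does not need."""
    return sorted(agent.granted - agent.needed)


def monthly_review(agents: list) -> dict:
    """Map each agent with unneeded access to the list of extras."""
    return {a.agent_id: excess_access(a) for a in agents if excess_access(a)}


def revoke_excess(agent: AgentRecord) -> list:
    """Trim access down to what is needed and report what was removed."""
    removed = excess_access(agent)
    agent.granted &= agent.needed
    return removed


def disable(agent: AgentRecord) -> None:
    """Kill switch: mark the agent as disabled."""
    agent.enabled = False


# Hypothetical inventory with one over-permissioned agent.
support = AgentRecord("support-agent",
                      granted={"email", "crm", "file-storage", "billing-db"},
                      needed={"email", "crm"})
reports = AgentRecord("reporting-agent",
                      granted={"billing-db"},
                      needed={"billing-db"})

print(monthly_review([support, reports]))
print(revoke_excess(support))
disable(support)
```

Keeping the "needed" list written down is the design choice that matters here. Without it, there is nothing to compare the agent's real access against.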

The Bottom Line

A $200 billion market is coming. Most of that value will be created by AI agents doing useful work inside businesses — managing data, automating workflows, handling tasks that used to require manual effort.

But useful work and unmonitored work are not the same thing. The businesses that benefit most from AI agents will be the ones that know what their agents are doing, can prove it, and can stop them when needed.

The question isn't whether to use AI agents. It's whether you know what yours are doing right now.
