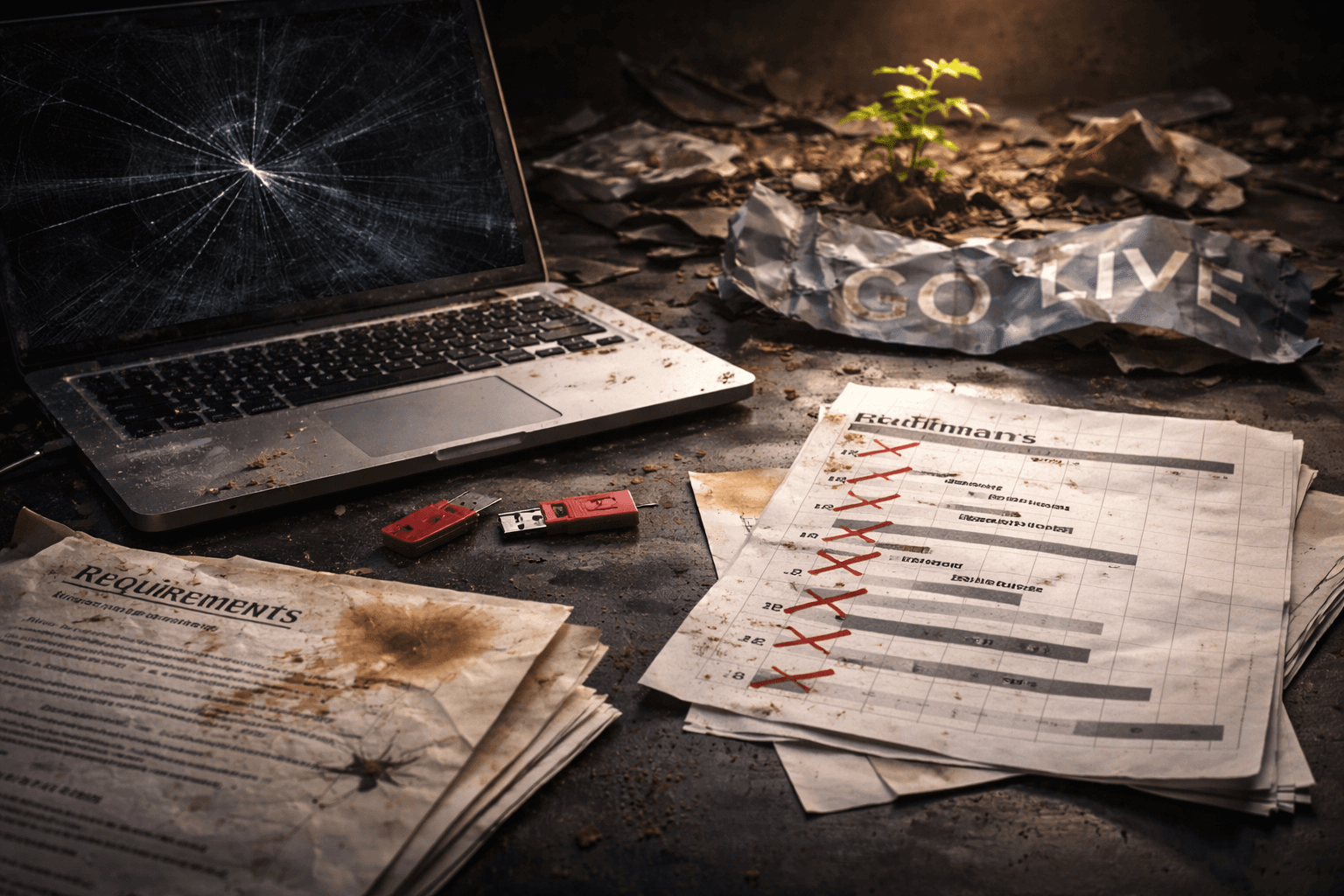

Why Most AI Implementations Fail (And How to Be the Exception)

73% of AI projects never make it to production. Here are the five reasons they fail and the specific fixes that separate successful implementations from expensive disappointments.

73% of AI projects never make it to production. That number comes from multiple industry reports over the last two years, and if anything, it understates the problem for small and mid-sized businesses.

That is not a technology problem. ChatGPT works. Automation platforms work. The AI tools available today are genuinely capable of doing useful things for real businesses. The problem is almost always in how the project gets planned, scoped, and executed.

Plenty of businesses spend $50,000 on AI initiatives that produce nothing. Others spend $200 a month and get results in a week. The difference is not budget. It is not the tools. It is five specific mistakes that the failures make and the successes avoid.

Reason 1: They Started With Technology Instead of a Problem

This is the most common and most expensive mistake. It goes like this:

A business owner reads an article about AI (possibly even one of ours). They get excited. They call a vendor or a consultant and say, "We want to implement AI." The vendor says great, here is our platform. They spend months integrating it. And at the end, nobody is quite sure what problem it solves.

Consider a regional insurance agency in this scenario. The operations director sees a demo at a conference of an AI-powered document processing system that reads insurance claims, extracts key data, and routes them to the right department. It looks like it would save hours.

Except the agency's actual bottleneck isn't document processing. Their staff spends most of their time on the phone explaining policy details to confused customers. The document processing takes 20 minutes a day. The phone calls take four hours.

They'd spend $35,000 on a system that saves 20 minutes a day.

The fix: Start with the problem, not the technology. Before you talk to a single vendor, answer one question: what specific task costs your team the most time, causes the most errors, or creates the most frustration? AI works best for small businesses when it targets a specific, measurable pain point. Start there. If the right answer turns out to be AI, great. If it turns out to be a $50-a-month automation tool or just a better spreadsheet, that is great too.
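
One way to make that question concrete is to list the candidate tasks with the time they consume and rank them. The sketch below uses the two figures from the insurance agency scenario. The `Task` class, the task names, and the 250 working days per year are illustrative assumptions, not part of any particular tool.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    minutes_per_day: float  # time the team spends on the task each working day


def rank_by_time_cost(tasks, working_days_per_year=250):
    """Return (name, hours_per_year) pairs, largest time cost first."""
    ranked = sorted(tasks, key=lambda t: t.minutes_per_day, reverse=True)
    return [(t.name, t.minutes_per_day * working_days_per_year / 60) for t in ranked]


tasks = [
    Task("document processing", 20),     # 20 minutes a day
    Task("policy phone calls", 4 * 60),  # four hours a day
]

for name, hours in rank_by_time_cost(tasks):
    print(f"{name}: about {hours:.0f} hours per year")
```

Under these assumptions the phone calls cost roughly twelve times as many hours per year as the document processing, which is the comparison to make before talking to a vendor.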

Reason 2: They Did Not Involve the People Who Would Actually Use It

Technology decisions made in conference rooms tend to fail in the field.

Imagine a mid-size accounting firm that decides to automate its client onboarding process. The partners select the platform, the IT team configures it, and they roll it out on a Monday morning with a training video and a one-page instruction sheet.

The staff hates it. Not because the system is bad; it is actually well designed. But nobody asked the staff what their actual workflow looks like. The automation assumes clients submit documents in a specific order. In reality, clients submit documents whenever they feel like it, in whatever format they have, sometimes by email, sometimes by fax (yes, still), and sometimes by handing a paper folder to the receptionist.

The system cannot handle the mess. The staff goes back to doing it manually within two weeks. $15,000 and three months of configuration, wasted.

The fix: Before you build anything, sit with the people who will use it. Watch them work for a day. Ask what they hate about the current process. Ask what workarounds they have invented. The best automation projects we have seen — including the kind we described in our customer onboarding automation guide — start with the operations people drawing the real workflow on a whiteboard, including all the messy exceptions.

The people who do the work every day know things the decision-makers do not. If you skip their input, you are building for a process that does not exist.

Reason 3: They Underestimated Data Quality

AI runs on data. If your data is bad, your AI is bad. This sounds obvious, but it trips up more projects than any technical challenge.

Suppose a home services company wants to use AI to predict which customers are likely to need follow-up service based on their maintenance history. Smart idea. Except their "maintenance history" is scattered across three different systems — a CRM, a scheduling tool, and a spreadsheet one dispatcher maintains on her personal computer. Customer names are spelled differently across systems. Half the service records have no dates. Equipment model numbers are entered freehand with no standard format.

The AI project cannot even start until they spend four months cleaning their data. By the time the data is ready, the champion who pushed for the project has moved on and nobody else cares enough to finish it.

The fix: Before you commit to an AI project, audit your data. Not a formal audit — just look at what you have. Is it in one place or scattered across five systems? Is it consistent? Is it complete? If the answer is "we would need to clean this up first," then cleaning the data is your first project, not the AI implementation.

Honestly, cleaning up your data is usually valuable on its own. You do not even need AI to benefit from having consistent, centralized records. And if you are curious about what AI integration actually involves, data preparation is always step one.
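
An informal audit like the one described above can start as a short script run against an export from one system. This is a minimal sketch: the column names (`customer`, `service_date`, `model`) and the sample rows are hypothetical, and in practice the rows would come from a CSV file exported from your CRM or scheduling tool.

```python
import csv
import io
from collections import Counter

# Hypothetical export; stands in for a real CSV file.
SAMPLE = """customer,service_date,model
Jane Smith,2024-03-01,XR-200
jane smith,,xr200
J. Smith,2024-05-12,XR 200
Bob Lee,,
"""


def audit(rows, required=("customer", "service_date", "model")):
    """Count blank values per column and flag names that differ only by case or spacing."""
    missing = Counter()
    name_variants = {}
    total = 0
    for row in rows:
        total += 1
        for col in required:
            if not (row.get(col) or "").strip():
                missing[col] += 1
        raw = (row.get("customer") or "").strip()
        key = " ".join(raw.lower().split())  # normalize case and whitespace
        if key:
            name_variants.setdefault(key, set()).add(raw)
    inconsistent = {k: sorted(v) for k, v in name_variants.items() if len(v) > 1}
    return {"rows": total, "missing": dict(missing), "inconsistent_names": inconsistent}


report = audit(csv.DictReader(io.StringIO(SAMPLE)))
print(report)
```

This only catches the easy cases. It would flag "Jane Smith" and "jane smith" as the same customer but not "J. Smith", and it does not try to standardize the freehand model numbers. Even so, a count of blank dates per system is usually enough to tell you whether data cleanup has to come first.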

Reason 4: No Success Metrics Defined Upfront

"We want to use AI to improve customer service."

Improve it how? By what measure? Compared to what? By when?

Without specific success metrics, AI projects drift. They expand. They become permanent R&D initiatives that never produce concrete results. And when someone finally asks "is this working?", nobody can answer because nobody defined what "working" meant.

Consider a retail chain that implements an AI chatbot for customer support with the goal of "reducing support tickets." Six months later, support tickets have dropped by 8%. The team is divided — is that success? The chatbot cost $40,000 in setup and $2,000 a month to run. An 8% reduction in tickets saves maybe one support rep a few hours a week. The math doesn't work, but nobody ran the math beforehand because nobody defined what "reducing support tickets" actually needed to mean for the project to be worthwhile.
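
The math nobody ran can be sketched in a few lines. The setup and monthly costs come from the scenario above. The 12-month horizon, the 3 rep-hours saved per week, and the $30 hourly cost are assumptions chosen only to illustrate the calculation.

```python
def net_value(setup_cost, monthly_cost, monthly_savings, months):
    """Savings minus total cost over the period; negative means the project lost money."""
    total_cost = setup_cost + monthly_cost * months
    return monthly_savings * months - total_cost


def required_monthly_savings(setup_cost, monthly_cost, months):
    """Monthly savings needed just to break even over the period."""
    return setup_cost / months + monthly_cost


# From the scenario: $40,000 setup, $2,000 a month.
# Assumed: 12-month horizon, 3 rep-hours saved per week, $30 per hour.
hours_saved_per_month = 3 * 52 / 12           # 13 hours
monthly_savings = hours_saved_per_month * 30  # $390

print(net_value(40_000, 2_000, monthly_savings, months=12))
print(required_monthly_savings(40_000, 2_000, months=12))
```

With these assumed inputs the chatbot would need to save a little over $5,300 a month to break even in the first year, against estimated savings of $390. Running the second function before the project starts is what turns "reducing support tickets" into a number the team can be held to.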

We wrote about measuring AI agents with real business metrics precisely because of this problem. If you cannot put a number on what success looks like before you start, you will not know whether you got there.

The fix: Before starting any AI project, write down three things: what metric you are trying to improve, what the current baseline is, and what number would justify the investment. "Reduce average response time to new inquiries from 4 hours to under 30 minutes." "Cut the weekly reporting process from 5 hours to 30 minutes." "Increase change order approval rate from 60% to 80%." If you hit the number, the project succeeded. If you do not, you know to adjust or stop.
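
Those three things fit in a very small record. The sketch below is one hypothetical way to write them down so the check at the end is mechanical. The `SuccessMetric` class and its field names are illustrative, and treating the target as inclusive is an assumption.

```python
from dataclasses import dataclass


@dataclass
class SuccessMetric:
    name: str
    baseline: float  # where you are today
    target: float    # the number that would justify the investment
    unit: str

    def is_met(self, actual: float) -> bool:
        """True when `actual` reaches the target, moving away from the baseline."""
        if self.target < self.baseline:  # lower is better
            return actual <= self.target
        return actual >= self.target     # higher is better


# Two of the example metrics from the text above.
reporting = SuccessMetric("weekly reporting process", 300, 30, "minutes")
approval = SuccessMetric("change order approval rate", 60, 80, "%")

print(reporting.is_met(45))  # 45 minutes is better than before but misses the target
print(approval.is_met(82))
```

The direction of improvement is inferred from whether the target sits below or above the baseline, so the same record works for "cut" and "increase" goals.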

Reason 5: They Treated It as a Project Instead of a Process

This is the subtlest mistake and the one that kills more AI initiatives in the long run than any other.

A successful AI implementation is not something you finish. It is something you maintain.

Imagine a marketing agency that builds an AI-powered content workflow. It drafts social media posts, suggests email subject lines, and generates first drafts of blog content. For the first two months, it's great. The team saves ten hours a week. Everyone's happy.

Then the AI starts producing content that feels stale. It keeps using the same phrases, the same structures, the same examples. The team has not updated the prompts, has not provided new examples of their evolving brand voice, and has not adjusted the workflow as their content strategy shifted. The AI is doing exactly what they set it up to do three months ago. The business has moved on. The AI has not.

By month four, the team is rewriting everything the AI produces, spending nearly as much time as they did before the automation. The project is quietly abandoned.

The fix: Treat AI implementations like you treat employee onboarding. You do not hire someone, train them once, and then never give them feedback. You check in. You adjust. You give them updated information. AI tools need the same attention — not constant, but regular.

Schedule a monthly review of any AI workflow. Is it still doing what you need? Are the outputs still good? Has anything changed in your business that the AI does not know about yet? This is the difference between businesses that get lasting value from AI and businesses that get a two-month honeymoon followed by quiet disappointment. Keeping AI agent costs under control is part of this ongoing maintenance.

The Pattern That Works

Every successful AI implementation we have seen follows the same pattern. It is not glamorous. It is not fast. But it works.

Start small. Pick one process. One problem. One workflow. Not the most strategic initiative in the company. The most annoying repeatable task. We have a whole guide on building your first workflow if you want the step-by-step version.

Prove value fast. Get something working within days or weeks, not months. If your team cannot see a tangible improvement within two weeks of starting, something is wrong with the scope. Either the problem was too big or the solution was too complex.

Get buy-in from the people who use it. Not from the boardroom. From the desk where the work happens. If the person doing the task every day says "this is better," you have a win. If they are working around the automation, you have a problem.

Measure before and after. How long did this take before? How long does it take now? How many errors before? How many now? Numbers do not lie, and they make the case for expanding better than any pitch deck.

Then expand. Once the first workflow is working and proven, build the next one. Then the next. Each one builds on the confidence and infrastructure of the last. Within six months, you have a system — not a science project.

The Honest Truth

AI is genuinely useful for business. We say that as people who work with it every day and have seen it save real time and real money for real companies. The tools available in 2026 are better, cheaper, and easier to use than anything that has existed before. If your business has repetitive processes and you are not exploring automation, you are leaving value on the table.

But AI is not magic. It does not fix broken processes — it automates them, broken parts and all. It does not replace good judgment — it amplifies whatever judgment you point it at. And it does not succeed on its own — it succeeds when someone takes the time to aim it at the right problem, measure whether it is working, and adjust when it is not.

The 27% of AI projects that make it to production and deliver real value are not luckier. They are not using better tools. They are just doing the boring parts right — scoping carefully, involving the right people, cleaning the data, defining success, and treating it as an ongoing process instead of a one-time project.

That is not a technology insight. It is a management insight. And ignoring it is the reason most AI implementations fail.

Blue Octopus Technology helps businesses implement AI and automation that actually works — scoped to real problems, measured with real metrics, and built to last. If you have tried AI before and it did not stick, or if you are starting from scratch and want to get it right the first time, let's talk.
