How We Built an AI Research Pipeline That Processes 20 Links in 10 Minutes

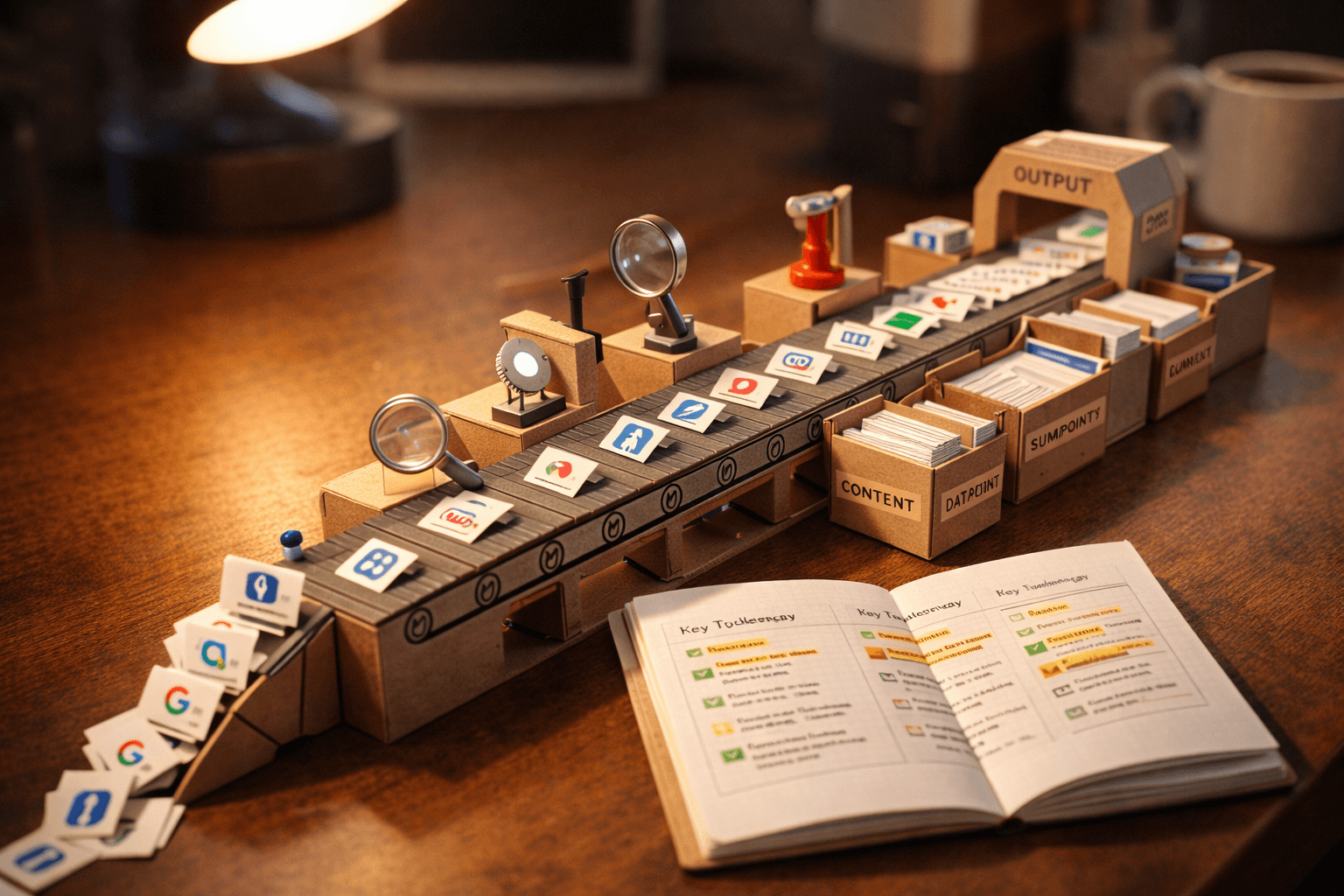

We built an AI research pipeline that turns raw links into organized business intelligence. 168 links processed, 62 deep dives, 276 implementation ideas extracted. Here's exactly how it works.

Every day, we process 15-20 links from Twitter, articles, GitHub repos, and videos, and we don't read them all manually. The pipeline turns those raw links into organized business intelligence in about 10 minutes.

One command. Paste a link. Walk away.

When it finishes, the link has been fetched, read, analyzed through five business lenses, filed into the right knowledge base documents, cross-referenced against everything we already know, and logged with a timestamp we can search later. Every actionable idea gets extracted and tracked. Every person worth following gets added to a watchlist. Every trend gets connected to our existing signals.

168 links processed so far. 62 deep dives. 90+ organized bookmarks. 35+ people tracked. 276 implementation ideas extracted. 16 strategic signals maintained. All of it searchable, all of it connected, all of it in plain markdown files we can read anytime.

This post is about how that system works, why we built it, and what it means for businesses that are drowning in information but starving for insight.

The Problem: Bookmarks Are a Graveyard

We used to bookmark things. Everyone does. You see an interesting article, you save it. A useful tool, you bookmark it. A smart thread on Twitter, you hit the little flag icon.

Then you never look at any of it again.

This isn't a discipline problem. It's a systems problem. Bookmarks are filing, not thinking. They capture the existence of information without capturing what it means. By the time you go back to review a bookmark — if you ever do — the context is gone. You can't remember why it mattered. You definitely can't connect it to the three other bookmarks that said something similar.

We were sitting on hundreds of saved links across multiple platforms. We'd see the same trend mentioned by five different people in the same week and not even realize it was a pattern because the links were scattered across browser bookmarks, Twitter saves, and a notes app.

The question wasn't "how do we save more?" It was "how do we actually USE what we find?"

What We Built

The pipeline is a single command — /research — followed by a URL. That's it. From there, AI handles everything through an 8-step workflow. Here's each step in plain language, with real examples from our actual system.

Step 1: Log It

The link gets a timestamp and enters an intake log. Format: date, time, status, title, URL. Every link ever processed, newest first.

This sounds trivial. It isn't. The intake log is the source of truth for "what was the last thing we processed?" and "have we seen this before?" When you're handling 15-20 links a day, losing track of one means losing the insight that came with it.

Our log has 168 entries dating back to January 27. Every one is timestamped. Every one has a status: pending, processed, or actioned. Nothing falls through.
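A minimal Python sketch of an intake log along these lines. The fields (date, time, status, title, URL), the three statuses, and the newest-first ordering come from the description above; the class name, the `normalize` helper, and the pipe-separated line format are illustrative assumptions, not the actual implementation.

```python
from dataclasses import dataclass
from datetime import datetime

# Statuses named in the post.
VALID_STATUSES = {"pending", "processed", "actioned"}


@dataclass
class IntakeEntry:
    logged_at: datetime
    status: str
    title: str
    url: str

    def to_line(self) -> str:
        # Assumed on-disk format: date | time | status | title | URL
        stamp = self.logged_at.strftime("%Y-%m-%d | %H:%M")
        return f"{stamp} | {self.status} | {self.title} | {self.url}"


def normalize(url: str) -> str:
    # Hypothetical normalization: ignore surrounding spaces and trailing slashes.
    return url.strip().rstrip("/")


def log_link(log: list[IntakeEntry], title: str, url: str, now: datetime) -> bool:
    """Add a pending entry at the top of the log unless the URL was seen before."""
    if any(normalize(entry.url) == normalize(url) for entry in log):
        return False  # answers "have we seen this before?"
    log.insert(0, IntakeEntry(now, "pending", title, url))  # newest first
    return True
```

Keeping the duplicate check inside `log_link` means the "have we seen this before?" question is answered at intake rather than after the analysis has already run.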

Step 2: Fetch and Follow the Thread

AI reads the full content of the link. But it doesn't stop there. If a tweet references an article, it fetches the article. If the article mentions a GitHub repo, it fetches the README. If the repo links to a demo or a related project, it follows that too.

A single link routinely spawns 3-8 additional fetches.

Here's a real example. We processed a single tweet about the "Cursor Directory" — a developer directory that makes $35,000 a month. The tweet linked to an X article that was behind authentication. So the system pulled from 8 different web sources to reconstruct the full story: the origin tweet, reply threads, the open-source repo, revenue screenshots, and corroborating posts from other developers. One link became a complete case study.

For content the system can't access directly — like JavaScript-rendered pages, PDFs, or login-protected articles — it uses workarounds. A syndication API for tweet content. Web searches to find summaries of inaccessible articles. Browser automation for pages that need real rendering. YouTube videos get flagged as "pending transcript" for a human to generate later.

The principle is simple: follow the thread until there's nothing left to follow. One link is never just one link.
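The "follow the thread" idea can be sketched as a breadth-first crawl with a fetch budget. This is an illustration under assumptions: `follow_thread`, the injected `fetch` callable, and the default budget of nine fetches (the original link plus up to eight more) are hypothetical choices, and the in-memory `FAKE_WEB` stands in for real network access.

```python
import re
from collections import deque
from typing import Callable

URL_PATTERN = re.compile(r"https?://\S+")


def follow_thread(
    start_url: str,
    fetch: Callable[[str], str],
    max_fetches: int = 9,
) -> dict[str, str]:
    """Fetch a link, then the links its content mentions, breadth first."""
    pages: dict[str, str] = {}
    queue = deque([start_url])
    while queue and len(pages) < max_fetches:
        url = queue.popleft()
        if url in pages:
            continue  # already fetched via another path
        try:
            text = fetch(url)
        except Exception:
            # Inaccessible content is recorded as empty so a fallback
            # (search, syndication, browser automation) can be tried later.
            text = ""
        pages[url] = text
        for found in URL_PATTERN.findall(text):
            found = found.rstrip(".,;:)\"'")  # drop trailing punctuation
            if found not in pages:
                queue.append(found)
    return pages


# Placeholder pages standing in for a tweet, an article, and a repo README.
FAKE_WEB = {
    "https://example.com/tweet": "Great read: https://example.com/article",
    "https://example.com/article": "Source code at https://example.com/repo.",
    "https://example.com/repo": "README: installation and usage notes",
}

pages = follow_thread("https://example.com/tweet", FAKE_WEB.__getitem__)
print(list(pages))
```

Passing `fetch` in as a parameter keeps the traversal logic separate from how content is actually retrieved, so each workaround for hard-to-reach content can be swapped in without touching the crawl.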

Step 3: Analyze Through Five Business Lenses

This is where the system diverges from every bookmark tool, read-later app, and RSS reader in existence.

Every piece of content gets evaluated against five questions:

IMPLEMENT? Should we do this for our own business operations?

OFFER? Should we package this as a service and sell it to clients?

TOOL? Should we use this in our workflow?

MONITOR? Should we track this trend without acting yet?

CONTENT? What blog posts, social posts, or videos could we create from this?

A bookmark says "this is interesting." Our five-lens analysis says "here's exactly what to DO with it."

Not everything scores high on every lens. A new JavaScript framework might be "Monitor" only. A competitor's pricing page might be "Implement" (adjust our pricing) and "Content" (write about the trend). A new AI tool might hit all five: apply it to our own operations, add it to our workflow, offer it to clients, write about it, and track where it goes.

The point is rigor. Every link gets the same structured analysis, not just the ones we happen to have energy for on a given afternoon.
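One way to make the five lenses a fixed structure rather than a habit is to encode them as an enumeration. The lens names and questions below are taken from the list above; the `LensAnalysis` class, its methods, and the example notes are hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Lens(Enum):
    IMPLEMENT = "Should we do this for our own business operations?"
    OFFER = "Should we package this as a service and sell it to clients?"
    TOOL = "Should we use this in our workflow?"
    MONITOR = "Should we track this trend without acting yet?"
    CONTENT = "What blog posts, social posts, or videos could we create from this?"


@dataclass
class LensAnalysis:
    url: str
    notes: dict[Lens, str] = field(default_factory=dict)

    def add(self, lens: Lens, note: str) -> None:
        """Record why this link matters under one lens."""
        self.notes[lens] = note

    def tags(self) -> str:
        # Lenses in the order they were recorded, e.g. "TOOL + OFFER".
        return " + ".join(lens.name for lens in self.notes)

    def unanswered(self) -> list[Lens]:
        """Lenses that still have no note, so none gets skipped silently."""
        return [lens for lens in Lens if lens not in self.notes]


analysis = LensAnalysis("https://example.com/some-tool")  # placeholder URL
analysis.add(Lens.TOOL, "Could replace a manual step in our workflow")
analysis.add(Lens.OFFER, "Could be packaged as a client service")
print(analysis.tags())
```

The `unanswered` method reflects the rigor described above: every link is checked against all five questions, even when the answer for most of them is "no".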

Steps 4 and 5: Build and Update the Knowledge Base

Based on the analysis, the system creates or updates real files:

New methodology discovered? A strategy document gets created. We have 23 of these — deep breakdowns of things like self-healing workflows, agent architecture patterns, browser automation landscapes, and security frameworks. Each one is a standalone reference doc with enough detail to actually implement what it describes.

New tool found? A tool evaluation gets created. We have 14 — each one documenting what the tool does, its maturity level, installation instructions, use cases, and security notes. Not a product review. A professional assessment.

New person producing consistently high-value content? They get added to a people-to-watch list, ranked by follow priority. We track 35+ people — not just that they posted something good once, but whether they're a reliable source of signal worth monitoring long-term.

Curated reference? It goes into an organized bookmark collection — 90+ entries, sorted by topic, each with author, date, URL, content summary, tags, and notes.

Intelligence brief update? If the research changes our understanding of a strategic signal, the brief gets updated. The intelligence brief is the "so what?" document — the synthesized view of everything we know, organized into 16 key signals with our assessment of where we stand on each one.

This is the compounding part. Link number 1 created a few bookmarks. Link number 168 gets cross-referenced against 16 signals, 23 strategy docs, 14 tool evaluations, 35 tracked people, and 276 existing ideas. The system gets smarter with every link because it has more context to connect against.
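The routing from findings to files can be sketched as a small lookup table. The five document types mirror the ones described above; every path, the `slugify` helper, and the `plan_updates` function are assumptions made for illustration, since the post only states that the knowledge base is markdown files in a git repository.

```python
import re

# Hypothetical file layout, one route per document type described in the post.
ROUTES = {
    "methodology": "strategies/{slug}.md",    # new strategy document
    "tool": "tools/{slug}.md",                # new tool evaluation
    "person": "people-to-watch.md",           # shared watchlist
    "reference": "bookmarks.md",              # curated bookmark collection
    "signal_update": "intelligence-brief.md", # synthesized signals
}


def slugify(title: str) -> str:
    """Turn a title into a lowercase, hyphen-separated file name stem."""
    return re.sub(r"[^a-z0-9]+", "-", title.lower()).strip("-")


def plan_updates(title: str, findings: list[str]) -> list[str]:
    """Return the files one research session would create or update."""
    slug = slugify(title)
    paths: list[str] = []
    for kind in findings:
        if kind not in ROUTES:
            raise ValueError(f"unknown finding type: {kind}")
        path = ROUTES[kind].format(slug=slug)
        if path not in paths:
            paths.append(path)
    return paths


print(plan_updates("Google Maps Scraper", ["tool", "reference"]))
```

Planning the file list before writing anything also gives a natural place to report which documents a session touched.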

Step 6: Report Back

After processing, the system gives us a structured summary: what it found, what category it falls into, what it connects to, and what to do about it. This is the briefing. We read it, decide if we agree with the assessment, and move on to the next link.

Steps 7 and 8: Mark Complete and Extract Ideas

The intake log status changes from "pending" to "processed." If the research produced actionable ideas — things to build, tools to adopt, services to sell, people to engage with — they go into an implementation backlog. That backlog has 276 items across six categories: BUILD, ADOPT, OFFER, ENGAGE, SECURITY, and CONTENT. Each one has an effort estimate and a status.

The backlog is a registry, not a to-do list. Its job is to make sure nothing falls through the cracks. We decide what to act on. The system makes sure we don't forget what we could act on.
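A backlog entry of this kind could be modeled as follows. The six categories come from the description above; the effort scale ("S", "M", "L"), the default status, and the field names are hypothetical.

```python
from collections import Counter
from dataclasses import dataclass

# Categories named in the post.
CATEGORIES = ("BUILD", "ADOPT", "OFFER", "ENGAGE", "SECURITY", "CONTENT")


@dataclass
class Idea:
    summary: str
    category: str
    effort: str            # assumed scale: "S", "M", or "L"
    status: str = "open"   # assumed default status
    source_url: str = ""   # link the idea was extracted from

    def __post_init__(self) -> None:
        if self.category not in CATEGORIES:
            raise ValueError(f"unknown category: {self.category}")


def summarize(backlog: list[Idea]) -> dict[str, int]:
    """Count ideas per category, including categories with no ideas yet."""
    counts = Counter(idea.category for idea in backlog)
    return {category: counts[category] for category in CATEGORIES}


backlog = [
    Idea("Evaluate a scraping tool", "ADOPT", "S"),
    Idea("Write a post about a trend", "CONTENT", "M"),
    Idea("Check posting tool against platform rules", "SECURITY", "S"),
]
print(summarize(backlog))
```

Rejecting unknown categories at creation time keeps the registry consistent, which matters more for a registry than for a to-do list because entries may sit untouched for a long time.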

A Real Example: One Tweet Becomes a Content Strategy

In February, we processed a tweet from @rohanpaul_ai sharing a Mark Cuban video clip about AI and small business. Cuban's argument: "Software is dead. Everything's gonna be customized. There are 33 million companies in the US. Who's gonna customize it for them?" 7,500 likes.

That's one link. Here's what happened next.

The system followed the thread. It found @damianplayer's commentary on the same clip (10,000 likes): "Pick one vertical, learn the flows, become the AI team they never hired." Then it found @aakashgupta's analysis (1,100 likes) framing Cuban's thesis as a historical parallel to the IT services boom.

Three independent sources saying the same thing. That's not a bookmark. That's a signal.

The system tagged all three as validating our business thesis (we build custom AI for non-technical businesses — which is exactly what Cuban described). It updated our intelligence brief. It added all three to our curated bookmarks. It flagged a content opportunity.

That one tweet, followed through its connections, became a full blog post — "Software Is Dead. Custom AI Is What Comes Next." — backed by five independent sources including a plumber who canceled a $40,000 software contract because AI tools did the job.

One link. Three converging sources. One signal update. One blog post. One validated business thesis.

That's the difference between "I bookmarked a Mark Cuban tweet" and "I identified a market signal from converging independent sources and turned it into a content asset."
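The "three independent sources" test in this example can be expressed as a simple convergence check. This is a sketch under assumptions: the threshold of three distinct authors follows the example, the seven-day window is an assumed default, and the `Mention` type, author names, signal labels, and dates are placeholders.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Mention:
    author: str
    signal: str   # label for the idea or trend being discussed
    seen_on: date


def converging_signals(
    mentions: list[Mention],
    min_authors: int = 3,
    window: timedelta = timedelta(days=7),
) -> dict[str, list[str]]:
    """Return signals raised by enough distinct authors within one window."""
    by_signal: dict[str, list[Mention]] = defaultdict(list)
    for mention in mentions:
        by_signal[mention.signal].append(mention)

    result: dict[str, list[str]] = {}
    for signal, items in by_signal.items():
        items.sort(key=lambda m: m.seen_on)
        for start in items:
            # Distinct authors inside the window that opens at this mention.
            end = start.seen_on + window
            authors = {m.author for m in items if start.seen_on <= m.seen_on <= end}
            if len(authors) >= min_authors:
                result[signal] = sorted(authors)
                break
    return result


# Placeholder data: three authors on one topic within three days.
mentions = [
    Mention("author_a", "custom-ai", date(2024, 2, 1)),
    Mention("author_b", "custom-ai", date(2024, 2, 2)),
    Mention("author_c", "custom-ai", date(2024, 2, 3)),
    Mention("author_a", "other-topic", date(2024, 2, 1)),
]
print(converging_signals(mentions))
```

Counting distinct authors rather than posts is the important design choice: one person repeating a claim three times is not the same as three people reaching it independently.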

What the Knowledge Base Actually Looks Like

People imagine "knowledge base" means a database with an admin panel and a query engine.

Ours is markdown files in a git repository. Plain text. No database. No API. Any human can open any file and read it.

The inventory: an intake log (168 timestamped entries), an intelligence brief (16 strategic signals with our position on each), 23 strategy documents (deep enough to implement from), 14 tool evaluations (with security notes and maturity ratings), a people-to-watch list (35+ individuals ranked by signal quality), 90+ organized bookmarks (each with full context and tags), 276 implementation ideas (categorized by type with effort estimates), and a content pipeline tracking every blog post from idea to published.

Every file is version-controlled through git. Every change is tracked. We can see exactly what was added, when, and from which research session. The system has its own institutional memory.

Why This Isn't Just a Better Bookmark Tool

The difference between bookmarking and what this system does comes down to three things.

Every link gets the same rigor. When you read things manually, you give your best attention to the first few items and skim the rest. It's human nature. Our system gives the same five-lens analysis to link number 168 that it gave to link number 1. The quality doesn't degrade with volume.

Patterns emerge automatically. When five different people on Twitter are saying the same thing in the same week, and those five posts get processed through the same analysis framework and cross-referenced against the same 16 signals, the pattern is obvious. In a bookmark folder, those five posts are just five separate items that look unrelated.

Nothing falls through the cracks. Every actionable idea — every tool to evaluate, every service to consider, every person to follow, every content opportunity — gets logged and tracked. We decide what to pursue and what to ignore. But the system guarantees that the decision is conscious, not accidental.

Here's a concrete example. In a single sitting, we processed 26 links. Among them: a Google Maps scraper for extracting business data (TOOL + OFFER), a Chrome standard for agent-ready websites (MONITOR + CONTENT), an X automation crackdown warning (IMPLEMENT: verify our posting tool is safe), and a lead generation case study where someone used AI to process 12,105 real estate listings in 90 minutes (OFFER: a directly sellable service).

Any one of those could have been a bookmark we saved and forgot. Instead, the scraper got a tool evaluation. The Chrome standard got a strategy document. The automation warning got flagged as a risk to our social media approach. The lead generation case study became a blog post.

Twenty-six links. Zero lost insights.

The Honest Take

We're not going to pretend this system materialized overnight or that it runs itself.

This took months to build and refine. The first version was rough. The analysis was shallow. The file structure didn't scale. We've rebuilt the pipeline multiple times. The system you'd see today is version four or five, depending on how you count.

It only works because we use it daily. The system IS the habit. If we stopped feeding it links, the intelligence brief would go stale, the signals would drift, and the whole thing would become another abandoned project. Consistency is non-negotiable.

AI does the heavy lifting, but a human still decides what matters. The system analyzes. It categorizes. It cross-references. It spots patterns. But it doesn't decide what to act on. Every recommendation still gets a human review. Some of the 276 implementation ideas will never get built. That's fine. The system is designed to capture everything so we can choose wisely — not to choose for us.

Not every link deserves deep analysis. Some are quick bookmarks. Some are "interesting but not relevant right now." The system handles both. A quick process might touch 3 files. A deep dive touches 8-10. The depth is proportional to the signal quality.

The file-based approach has tradeoffs. There's no query engine. No fuzzy search. No relational joins. To find something, you search through files. For the scale we're at — hundreds of entries, not millions — this is fine. If we hit 10,000 entries, we'd need to rethink. We haven't hit that wall yet, and the simplicity of markdown files has been worth more than the sophistication of a database.
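Searching through files at this scale needs very little code. The sketch below is a plain substring search over markdown files, assuming a single root folder; the function name and return shape are illustrative, and in practice a command-line tool such as `grep` does the same job.

```python
from pathlib import Path


def search_notes(root: Path, term: str) -> list[tuple[str, int, str]]:
    """Case-insensitive substring search over every markdown file under root.

    Returns (relative path, line number, line text) for each matching line.
    """
    needle = term.lower()
    hits: list[tuple[str, int, str]] = []
    for path in sorted(root.rglob("*.md")):
        lines = path.read_text(encoding="utf-8").splitlines()
        for number, line in enumerate(lines, start=1):
            if needle in line.lower():
                hits.append((path.relative_to(root).as_posix(), number, line.strip()))
    return hits
```

This reads every file on every search, which is the tradeoff named above: acceptable for hundreds of entries, and the first thing to replace with an index if the collection grows by orders of magnitude.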

What This Means for Your Business

You don't need our exact system. But you need SOME system for turning information into decisions.

Consider your current workflow. You read industry news, you follow competitors, you see new tools announced, you get recommendations from colleagues. Where does all of that go? If the answer is "browser bookmarks" or "a notes app" or "my memory," you're leaking insight constantly.

The gap between businesses that use AI for research and those that don't is going to widen fast. Not because AI is magic — because it's consistent. It reads every article with the same attention. It never has a low-energy afternoon where it skims instead of reading. It never forgets that a tool it saw three weeks ago is relevant to a problem that showed up today.

The competitive advantage isn't access to information. Everyone has access to the same articles, the same tweets, the same GitHub repos. The competitive advantage is structured processing of that information — turning the same raw material everyone else is ignoring into organized intelligence you can act on.

You could start small. Pick one topic your business needs to track — competitor activity, industry regulations, new tools in your space. Build a simple system: log what you find, analyze what it means, track what to do about it. Even a spreadsheet with "Link," "What it means," and "Action item" columns is better than a bookmark folder.
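For anyone who prefers a script to a spreadsheet app, the three-column version can be sketched as a CSV file that grows one row at a time. The column names come from the paragraph above; the `add_row` function and the file handling are assumptions for illustration.

```python
import csv
from pathlib import Path

COLUMNS = ["Link", "What it means", "Action item"]


def add_row(path: Path, link: str, meaning: str, action: str) -> None:
    """Append one finding, writing the header row first if the file is new."""
    is_new = not path.exists() or path.stat().st_size == 0
    with path.open("a", newline="", encoding="utf-8") as handle:
        writer = csv.writer(handle)
        if is_new:
            writer.writerow(COLUMNS)
        writer.writerow([link, meaning, action])
```

The resulting file opens directly in any spreadsheet program, so the habit can start in code and still be reviewed in a familiar tool.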

Or you could start with AI. Tools exist today that can read a webpage, summarize it, and extract key points. The gap between that and what we built is just structure and consistency — doing it the same way every time, connecting each new piece to everything you already know.

The businesses that will win over the next five years aren't the ones with the best products or the lowest prices. They're the ones that see the signals first and act on them fastest. That requires a system, not just good intentions.

We Built This for Ourselves. Now We're Building It for Clients.

Blue Octopus Technology started the intelligence hub as an internal tool. We needed to track AI trends, evaluate tools, and make better decisions about where to invest our time. It grew into something bigger — a system that processes raw information into structured business intelligence at a pace that would be impossible manually.

We're now building versions of this for clients. Not copies of our exact system — customized pipelines tuned to specific industries, specific competitors, specific questions. A real estate firm tracking market signals. A law practice monitoring regulatory changes. A SaaS company watching competitor feature releases.

The underlying principle is the same: stop hoarding bookmarks, start processing intelligence.

If you want to stop drowning in information and start making faster decisions, let's talk about what a research pipeline could look like for your business.

Blue Octopus Technology helps businesses work smarter with AI — without the complexity. See what we build.
