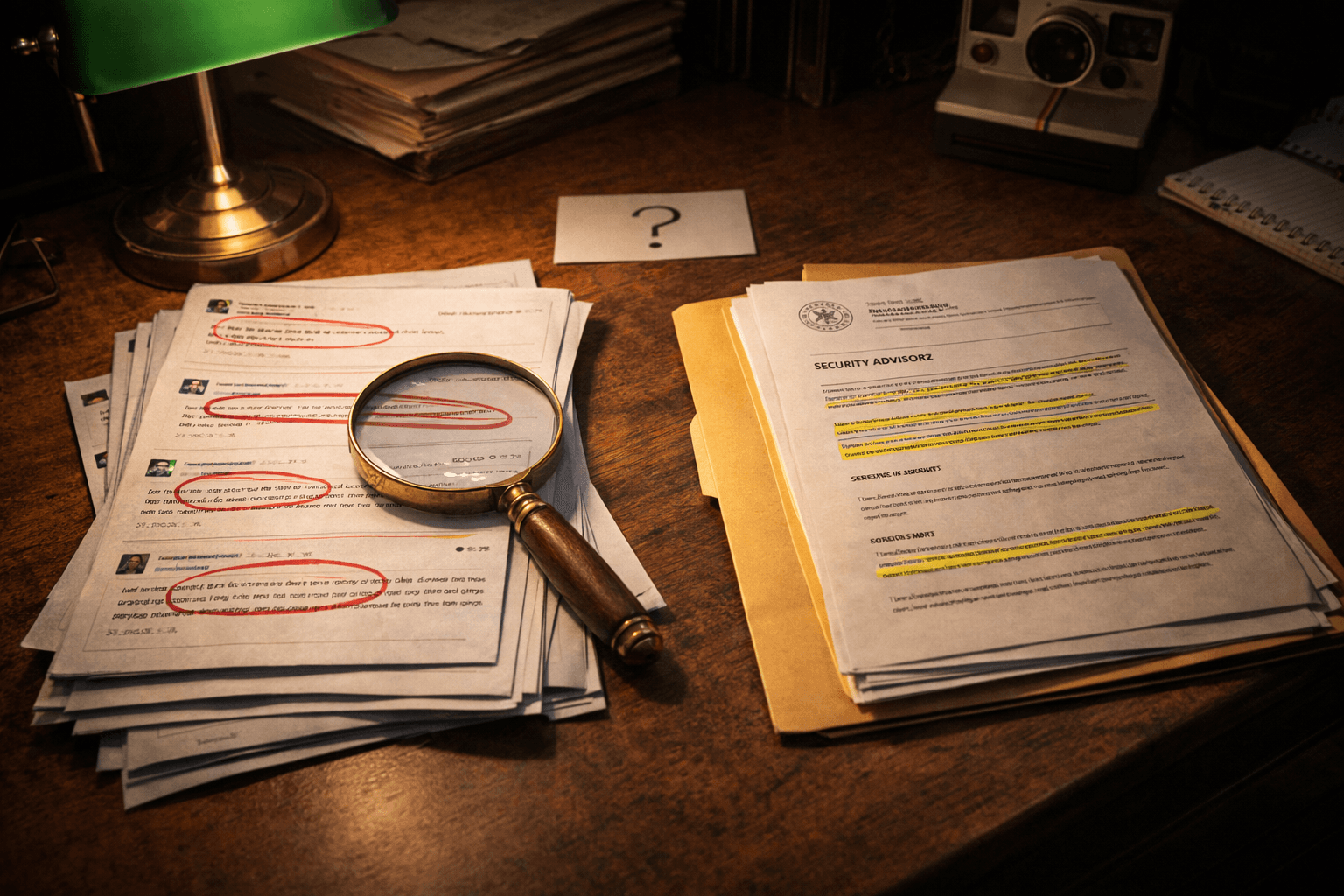

Two Insiders Sounded the Alarm About a Popular AI Library. The Security Record Tells a Different Story.

Andrej Karpathy and Aakash Gupta separately raised the alarm that a 'pip install litellm' was exfiltrating credentials at scale. The official security advisories for the package don't describe that attack. We dug into what's actually documented — and what every business should learn from the gap.

In late March, two well-known voices in the AI world raised the alarm about a popular Python library called litellm. Andrej Karpathy — the former Tesla AI director, OpenAI co-founder, and one of the most-followed technical voices in the field — posted what he called "software horror." His claim: that running pip install litellm enabled the exfiltration of SSH keys, AWS, GCP, and Azure credentials, Kubernetes configs, git credentials, environment variables, shell history, cryptocurrency wallets, SSL private keys, CI/CD secrets, and database passwords.

A day later, Aakash Gupta — a product strategy commentator with a large enterprise audience — raised the same alarm in different words: "Someone just poisoned the Python package that manages AI API keys for NASA, Netflix, Stripe, and NVIDIA. 97 million downloads a month, and a simple pip install was enough to steal everything on your machine."

That sounds catastrophic. So we went looking for the official record.

What's Actually Documented

The litellm project publishes its security advisories on GitHub, the standard place for open-source security disclosures. As of mid-April, the three published advisories for the litellm package in the relevant window are:

- GHSA-jjhc-v7c2-5hh6 (April 3, 2026, Critical): Authentication bypass via OIDC userinfo cache key collision. Assigned CVE-2026-35030. The vulnerability is a flaw in how litellm caches authentication tokens when JWT/OIDC authentication is enabled. The system used the first 20 characters of a JWT as a cache key. Because tokens from the same signing algorithm share those characters, an attacker could craft a malicious token that matched a legitimate user's cache entry and assume their identity. Affected versions: all before 1.83.0. Patched: 1.83.0. Notably: this vulnerability requires enable_jwt_auth: true, which is not the default configuration. Most deployments are unaffected.

- GHSA-53mr-6c8q-9789 (April 3, 2026, High): Privilege escalation via unrestricted proxy configuration endpoint.

- GHSA-69x8-hrgq-fjj8 (April 4, 2026, High): Password hash exposure and pass-the-hash authentication bypass.

All three are vulnerabilities in litellm's proxy server code. None of them describe a supply-chain compromise of the package itself on PyPI. None describe credential exfiltration as part of installation. The patched OIDC bug requires a non-default configuration to even be exploitable.
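To see why a 20-character prefix makes a poor cache key, here is a minimal Python sketch. It is an illustration only, not litellm's actual code or its actual patch: the function names, the dummy signature, and the hashed-key alternative are all assumptions made for the example. The point is that the first characters of a JWT encode its header, which is identical for every token that uses the same algorithm.

```python
import base64
import hashlib
import json


def b64url(data: bytes) -> str:
    """Base64url-encode without padding, the way JWT segments are encoded."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def make_token(header: dict, payload: dict) -> str:
    """Build a JWT-shaped string (header.payload.signature).

    The signature is a placeholder; this sketch is about cache keys only.
    """
    h = b64url(json.dumps(header, separators=(",", ":")).encode())
    p = b64url(json.dumps(payload, separators=(",", ":")).encode())
    return f"{h}.{p}.dummy-signature"


def prefix_cache_key(token: str, length: int = 20) -> str:
    """Hypothetical flawed key: only the first `length` characters."""
    return token[:length]


def full_cache_key(token: str) -> str:
    """Hypothetical safer key: a hash over the entire token."""
    return hashlib.sha256(token.encode("ascii")).hexdigest()


header = {"alg": "RS256", "typ": "JWT"}
alice = make_token(header, {"sub": "alice"})
mallory = make_token(header, {"sub": "mallory"})

# Different tokens, same prefix key, because the prefix lies inside the header.
print(alice != mallory)
print(prefix_cache_key(alice) == prefix_cache_key(mallory))
print(full_cache_key(alice) == full_cache_key(mallory))
```

Two tokens for different users collide under the prefix key and do not collide under the full-token hash, which is the general shape of the problem the advisory describes.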

What This Doesn't Mean

This isn't proof that Karpathy and Gupta were wrong. There are several plausible explanations:

- A different package with a similar name (a "typosquat") may have been temporarily published on PyPI before being taken down. This is a known attack pattern. If that happened, the GitHub advisory for the legitimate litellm package wouldn't reflect it.

- A specific version of litellm may have had a malicious dependency for a brief window before being yanked. PyPI does support version yanking, but the official record we see is whatever the project chose to disclose.

- The story may have been based on a misunderstanding of one of the proxy CVEs that got amplified in the retelling.

What we can say with confidence is: as of writing, the documented vulnerabilities for litellm don't describe what the tweets describe. That's a meaningful gap.

Why You Should Care About the Gap, Not Just the Story

Here's the thing. The exact details of the litellm situation matter less than the underlying lesson, which is one we've covered before in different forms: your AI vendor's dependencies are now your attack surface.

The reason both versions of the story land hard is that they could easily be true. The named claim — that a Python package whose specific job is managing API keys for major companies could be compromised in a way that ships secrets out — is technically plausible. The supply-chain attack pattern is well-documented. The PyPI ecosystem has had real, confirmed cases of this exact attack on other packages. There is no technical reason litellm couldn't have been a target.

So the question for any business owner reading this isn't "is the litellm story true." The question is "if this story were about a tool you use, would you know?"

For most businesses, the honest answer is no.

Three Patterns Every Business Should Adopt

The litellm situation — whether the catastrophic version is real, partially real, or overblown — surfaces three habits that protect against the underlying class of risk.

1. Pin your dependencies. When a tool you use updates a dependency, do you know? For Python projects, this means using a requirements.txt with version pins, not floating versions. For Node projects, this means using package-lock.json. For SaaS vendors, this means asking your vendors what their own pinning practice looks like. The default for most ecosystems is "always pull the latest," and the latest is exactly where supply-chain attacks ship.

2. Quarantine credentials. Any AI-related service running in your business should have its own credentials, scoped to only what it needs, with a documented procedure for rotating them. The reason the Karpathy/Gupta story landed so hard is that the named victims were companies whose credentials were apparently sitting on the same machines as their developer tools. The fix isn't to never use developer tools — it's to never put credentials where developer tools can read them.

3. Watch your vendors' security disclosures. GitHub Security Advisories are public. Every major open-source AI tool publishes them. Subscribe to the ones for the libraries you depend on. Your vendors' vendors are publishing too — you might not see them directly, but the vendors you've chosen to trust should be watching them on your behalf, and you should know they are.
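For the first habit, a quick audit of a Python requirements file might look like the sketch below. It is a simplification under stated assumptions: a line counts as pinned only if it uses an exact == version with no wildcard, and the sketch does not handle hash options, line continuations, or URL requirements. The function name and the sample file contents are hypothetical.

```python
import re

# Assumption: "pinned" means name (optional extras) == exact version,
# optionally followed by an environment marker after ";".
PINNED = re.compile(
    r"^[A-Za-z0-9._-]+(\[[^\]]*\])?\s*==\s*[^*\s;]+\s*(;.*)?$"
)


def unpinned_requirements(text: str) -> list[str]:
    """Return requirement lines that are not pinned to one exact version."""
    flagged = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()   # drop comments
        if not line or line.startswith("-"):  # skip blanks and pip options
            continue
        if not PINNED.match(line):
            flagged.append(line)
    return flagged


# Hypothetical requirements.txt contents, for illustration only.
sample = """\
# example project
litellm==1.83.0
requests>=2.0
numpy
"""

for entry in unpinned_requirements(sample):
    print("not pinned:", entry)
```

Running a check like this in CI turns "do you know when a dependency changes?" into a failing build rather than a surprise.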

The Story That Was Actually Underneath

The litellm episode is a good case study in how AI security stories propagate. A respected technical voice raised a serious concern. A second respected voice amplified it. The story became part of the conversation. The official record lagged behind, then turned out to describe something different.

This pattern is going to repeat. AI tools are moving fast, the security disclosure infrastructure is still maturing, and the gap between "someone tweeted an alarm" and "the formal advisory is published" can be days to weeks. During that gap, every business decision based on the story is being made on partial information.

The right posture isn't to ignore the alarm. It's to:

- Take the alarm seriously while you investigate.

- Check the official record before changing your behavior.

- Note the gap between them, because the gap teaches you something about the source.

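For litellm specifically: if you're not running litellm with enable_jwt_auth: true in production, the Critical OIDC bypass (CVE-2026-35030) does not apply to you. If you are, upgrade to 1.83.0 or later today. The two High-severity advisories are listed without that precondition, so anyone running the litellm proxy should also check their version against the affected ranges given in those advisories. If you're using litellm because a vendor of yours uses it, ask your vendor what version they're on.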
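A rough way to check an environment against the 1.83.0 threshold is sketched below, using only the standard library. The helper names are hypothetical, and the version parsing is deliberately simple: it stops at the first non-numeric part, so a pre-release such as 1.83.0rc1 is treated as below the threshold. The check covers only the OIDC advisory's patched version, not the other two advisories.

```python
from importlib import metadata

# First release with the OIDC fix, per the advisory cited in this article.
PATCHED = (1, 83, 0)


def parse_release(version: str) -> tuple[int, ...]:
    """Turn '1.82.3' into (1, 82, 3).

    Parsing stops at the first part that is not purely numeric.
    """
    parts = []
    for piece in version.split("."):
        if not piece.isdecimal():
            break
        parts.append(int(piece))
    return tuple(parts)


def is_patched(version: str, minimum: tuple[int, ...] = PATCHED) -> bool:
    """True if the parsed version is at or above the minimum."""
    return parse_release(version) >= minimum


def check_installed(package: str = "litellm") -> str:
    """Describe the installed version relative to the patched release."""
    try:
        installed = metadata.version(package)
    except metadata.PackageNotFoundError:
        return f"{package} is not installed in this environment"
    status = "at or above" if is_patched(installed) else "below"
    return f"{package} {installed} is {status} 1.83.0"


print(check_installed())
```

The same question can be put to a vendor in plain words: which version are you running, and how do you know?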

The deeper question — what tools are in your stack that could be compromised — is the one most businesses still don't have an answer to. The Karpathy and Gupta posts were a useful reminder that the answer matters.

Blue Octopus Technology helps small businesses build a clear picture of what's actually in their AI stack — including the dependencies their vendors carry. If you've been deploying AI tools without a clear vendor-risk picture, let's talk.
