30 FPS at 13 Watts: The Power Budget That Actually Matters for Edge AI

Edge AI specs always lead with TOPS. They almost never lead with watts. But on a battery, on a vehicle, in a fanless enclosure — power is the constraint that determines whether your real-time pipeline survives a 20-minute run, not raw compute.

Read any edge AI product page and you'll see TOPS. NVIDIA Jetson Orin AGX: 275 TOPS. Coral USB Accelerator: 4 TOPS. NVIDIA Thor (announced for production): 2000 TOPS. The number is large, the marketing leans hard on it, and it tells you almost nothing about whether the chip can do what you need.

The number that tells you whether the chip can do what you need is watts. Not the manufacturer's marketing "up to" wattage. Not the TDP under stress. The actual sustained wall-power draw at the workload you intend to run, measured at the wall plug or the battery rail.

Here's why that matters more than TOPS, and how to think about it.

A real measurement

A YOLO26n detector + BoT-SORT-noreid tracker + JSONL output running at 30 FPS on a Jetson Orin AGX 64GB at MODE_30W draws about 13 watts at the wall plug. The board is rated up to 60W and has a configurable power profile that maxes at MODE_60W. The vendor spec sheet shows 275 TOPS. None of those three numbers — 60W, 30W, 275 TOPS — predicted the 13W operational draw.

The 13W comes from:

- The detector running at 7ms per frame on a TensorRT FP16 engine (a small fraction of the GPU's available throughput)

- The tracker running at 16ms per frame on a single CPU core

- Idle utilization on the other CPU cores, the second GPU partition, and the DLA accelerator

- Background system overhead (the OS, the camera DMA, the network stack)
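The per-stage timings above can be turned into duty cycles and an energy-per-frame figure. This is a minimal sketch using only the numbers quoted in this section; the function names are illustrative, and treating each stage's time as a simple fraction of the frame period is a simplifying assumption (it ignores overlap between stages and clock scaling).

```python
# Numbers taken from the measurement described above.
FPS = 30.0
DETECTOR_MS = 7.0     # GPU time per frame (TensorRT FP16 engine)
TRACKER_MS = 16.0     # CPU time per frame on one core
WALL_POWER_W = 13.0   # sustained draw at the wall plug


def frame_budget_ms(fps: float) -> float:
    """Time available per frame at a given frame rate."""
    return 1000.0 / fps


def duty_cycle(stage_ms: float, fps: float) -> float:
    """Fraction of each frame period a stage keeps its unit busy."""
    return stage_ms / frame_budget_ms(fps)


def energy_per_frame_j(power_w: float, fps: float) -> float:
    """Joules spent per processed frame at a sustained power draw."""
    return power_w / fps


if __name__ == "__main__":
    print(f"frame budget:     {frame_budget_ms(FPS):.1f} ms")
    print(f"detector duty:    {duty_cycle(DETECTOR_MS, FPS):.0%} of the GPU's time")
    print(f"tracker duty:     {duty_cycle(TRACKER_MS, FPS):.0%} of one CPU core's time")
    print(f"energy per frame: {energy_per_frame_j(WALL_POWER_W, FPS):.2f} J")
```

Energy per frame is a handy unit for comparing pipelines that run at different frame rates, because it folds power and throughput into one number.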

At 13W sustained, this board can run on a 100Wh battery for about 7.7 hours (100Wh ÷ 13W, before conversion losses). At 30W (the configured profile maximum, if the workload happened to fill it), the same battery lasts about 3.3 hours. At 60W (the chip's full TDP, if you forced a heavier model into the same envelope), it lasts about 1.7 hours.

The difference between roughly 7.7 hours and 1.7 hours of mission endurance isn't a software optimization detail. It's the entire viability question.
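The endurance figures above are battery capacity divided by sustained draw. A minimal sketch of that arithmetic follows; `usable_fraction` is a hypothetical parameter added here to model converter losses and depth-of-discharge limits, and its value has to come from your own battery and regulator data.

```python
def endurance_hours(capacity_wh: float, draw_w: float,
                    usable_fraction: float = 1.0) -> float:
    """Ideal runtime in hours for a battery at a constant power draw.

    usable_fraction is an assumption, not a measured value: it scales the
    nameplate capacity to account for conversion losses and reserve.
    """
    if draw_w <= 0:
        raise ValueError("draw_w must be positive")
    if not 0.0 < usable_fraction <= 1.0:
        raise ValueError("usable_fraction must be in (0, 1]")
    return capacity_wh * usable_fraction / draw_w


if __name__ == "__main__":
    for draw in (13.0, 30.0, 60.0):
        ideal = endurance_hours(100.0, draw)
        derated = endurance_hours(100.0, draw, usable_fraction=0.9)
        print(f"{draw:>4.0f} W: {ideal:.1f} h ideal, {derated:.1f} h at 90% usable")
```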

Why TOPS doesn't tell you this

TOPS is a peak throughput number. It says: "if you fed this chip a workload that perfectly used every multiply-accumulate unit at every clock cycle with no memory stalls, this is the upper bound." Real workloads never look like that.

What actually determines power draw on a real workload:

The fraction of TOPS you actually use. A YOLO26n at 640×640 uses maybe 5-10% of the Jetson Orin's available TOPS at 30 FPS. The other 90-95% is idle silicon. Idle silicon draws power, but a lot less than active silicon. The closer you push toward the TOPS ceiling, the steeper the power curve gets.

Memory bandwidth. Modern ML models are usually memory-bound, not compute-bound. The GPU is waiting for activations to arrive from HBM or LPDDR more than it's running multiply-accumulates. Memory transactions cost power. A model that doesn't fit in cache will spend most of its energy moving data, not computing on it.

The power profile configuration. Jetson boards have configurable modes — MODE_15W, MODE_30W, MODE_60W on the Orin AGX. The mode caps the clock frequencies. A higher mode lets the GPU run faster, but at a superlinear power cost: higher clocks generally need higher supply voltage as well, so doubling the clock for the same workload typically costs 3-4× the power. Most real-time vision pipelines benefit from running at a lower power mode at near-saturation, not at a higher power mode with most of the silicon idle.

Thermal headroom. A board that can hit 60W in a bench test may sustain only 40W in a fanless enclosure at 35°C ambient. Thermal throttling kicks in, clocks drop, and your operating point shifts. The number you can sustain for 20 minutes isn't the number you can sustain for one second.

None of those factors show up in TOPS. They all show up at the wall plug.
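The clock-versus-power relationship in the power profile point can be made concrete with the standard first-order model of CMOS dynamic power. This is a textbook approximation, not a measurement of any Jetson board, and it ignores static (leakage) power. The symbols are introduced here for illustration: α is the switching activity factor, C the switched capacitance, V the supply voltage, f the clock frequency, and N the number of clock cycles a frame's work requires.

```latex
\begin{align*}
P_{\text{dyn}} &\approx \alpha\, C\, V^{2} f \\
\frac{P_{\text{dyn}}(2f)}{P_{\text{dyn}}(f)} &= 2\left(\frac{V_{2f}}{V_{f}}\right)^{2} \\
E_{\text{frame}} &= P_{\text{dyn}} \cdot \frac{N}{f} \approx \alpha\, C\, V^{2} N
\end{align*}
```

If the voltage could stay fixed, doubling the clock would only double the power. If the voltage had to scale in proportion to the clock, the ratio would be 8×. A 3-4× ratio corresponds to raising the voltage by roughly 22-41% for the doubled clock. The last line shows why a lower mode can win: under this model the energy per frame depends on V², not on f directly, so a lower-voltage operating point spends less energy per frame as long as the frame deadline is still met.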

The mission-budget framing

The right way to think about edge AI power isn't "how much TOPS do I have." It's "what's my mission power budget, and what real-time pipeline fits inside it."

The mission constrains the budget:

- Aviation / drone payload: 50-150W typical on a small UAS. Higher capacity drones push 200W+. The compute is sharing the budget with motors, radios, sensors, and a small reserve for safety margin.

- Vehicle integration: 200-500W typical for an aftermarket compute payload. Power is plentiful but heat dissipation matters.

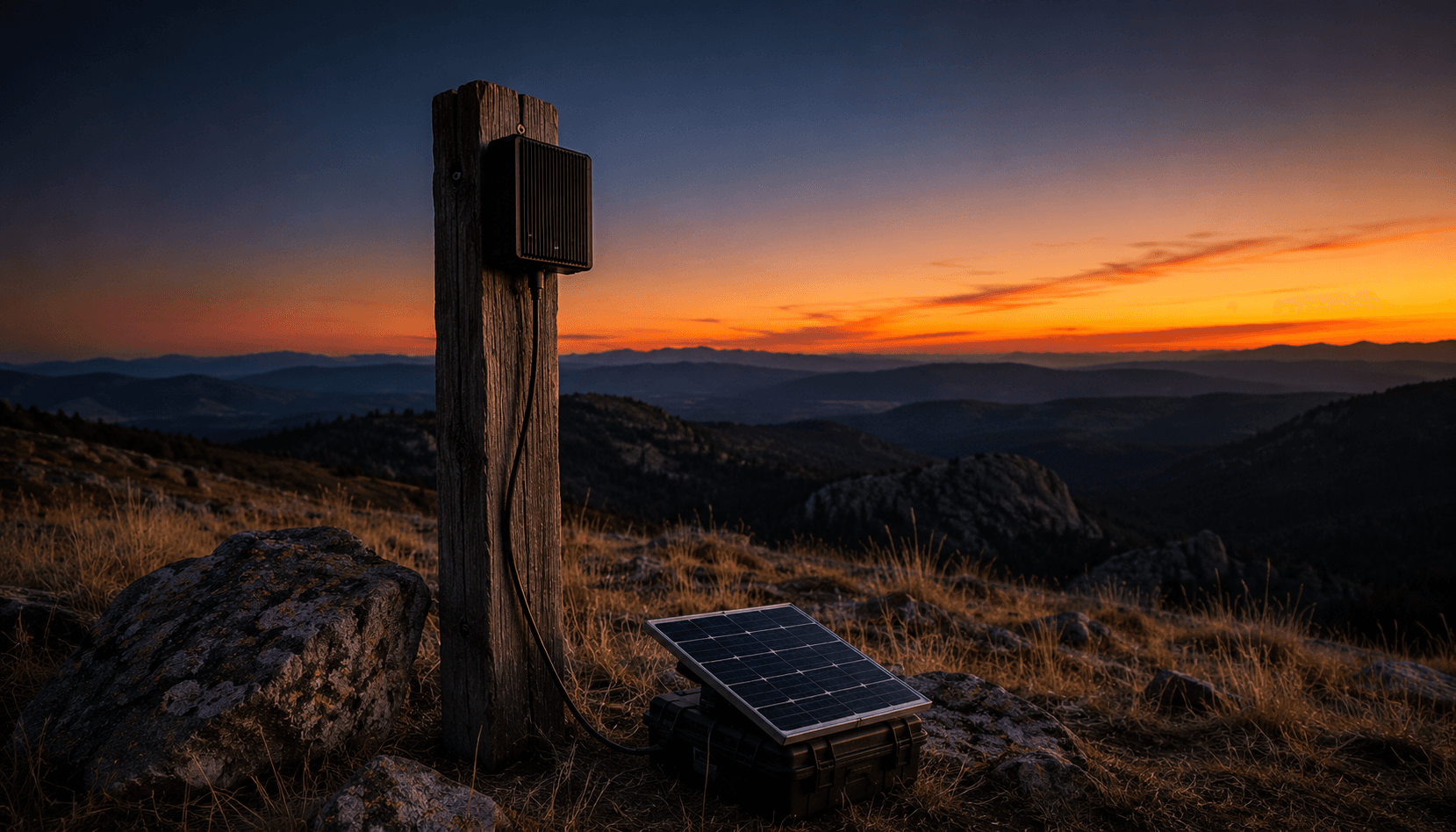

- Field-deployed sensor: 5-20W when running off a small battery or a solar-charged buffer. Power is scarce; the whole pipeline has to fit in single digits.

- Tethered installation (rack, building, vehicle with grid power): 100W+ available. Power isn't the constraint.

Once you know the budget, you back-solve the pipeline. A 13W detector pipeline at 30 FPS is acceptable on a 50W drone payload (leaving 37W for everything else). A 60W pipeline is not. A pipeline that pulses to 40W during heavy frames and drops to 8W during idle frames may be acceptable if the rest of the system can absorb the variance.
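The back-solve above can be sketched as a small budget check. The function names, the 25% heavy-frame fraction, and the 30W figure for the rest of the system are hypothetical values chosen for illustration; only the 50W, 13W, 40W and 8W numbers come from the text.

```python
def remaining_budget_w(budget_w: float, loads_w: list[float]) -> float:
    """Power left over after subtracting the listed loads from the budget."""
    return budget_w - sum(loads_w)


def average_pulsed_w(high_w: float, low_w: float, high_fraction: float) -> float:
    """Time-weighted average of a pipeline that alternates between two draws."""
    if not 0.0 <= high_fraction <= 1.0:
        raise ValueError("high_fraction must be in [0, 1]")
    return high_fraction * high_w + (1.0 - high_fraction) * low_w


def fits_budget(budget_w: float, other_loads_w: list[float],
                pipeline_avg_w: float, pipeline_peak_w: float) -> tuple[bool, bool]:
    """Return (fits on average, fits at peak) for a pipeline plus other loads."""
    others = sum(other_loads_w)
    return (others + pipeline_avg_w <= budget_w,
            others + pipeline_peak_w <= budget_w)


if __name__ == "__main__":
    print(remaining_budget_w(50.0, [13.0]))            # steady 13 W pipeline
    avg = average_pulsed_w(40.0, 8.0, high_fraction=0.25)  # assumed 25% heavy frames
    print(avg, fits_budget(50.0, [30.0], avg, 40.0))   # assumed 30 W of other loads
```

Checking the average and the peak separately matters: a pulsed pipeline can fit the budget on average while its peaks still exceed what the supply can deliver, which is the case where the rest of the system has to absorb the variance.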

The framing flips the question from "how much compute can I cram into this device" to "how much of my power budget am I willing to spend on compute versus everything else." That's a different question. It produces different answers.

The optimization order

Once power is the constraint, optimization priorities reshuffle. The default ML engineering instinct — "make the model bigger and more accurate" — runs straight into the power wall. You don't get to make the model bigger if the power budget says no.

The order that works in practice:

- Quantize what you have. FP16 cuts power on most workloads by 30-50% versus FP32. INT8 cuts another 20-30% versus FP16 if your model tolerates the accuracy loss. TensorRT, ONNX Runtime, OpenVINO all support this. Quantization is the highest-leverage power optimization available.

- Lower the power mode. If your workload doesn't need the full clock speed, configuring the board to a lower power mode is free. A YOLO26n that takes 7ms at MODE_60W might take 10ms at MODE_30W, but it draws half the power. If 10ms still meets your frame budget, take the win.

- Right-size the model. YOLO26n at 640×640 versus YOLO26s at 1280×1280 is a 10× difference in operational power. Pick the smallest model that hits your accuracy floor.

- Optimize the non-detector stages. Once the detector is small, the tracker and post-processing become a larger share of total power. A C++ tracker beats a Python tracker on power-per-frame; a single-threaded loop beats a multi-threaded one if the work is already small.

- Then consider hardware upgrades. A bigger board (more TOPS, more memory) is the last resort, not the first. It always raises the power floor.
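The "lower the power mode" step amounts to picking the cheapest operating point that still meets the frame deadline. The sketch below assumes you have measured latency and power for each mode yourself; the `OperatingPoint` type is hypothetical, the mode names are used only as labels, and the wattages are placeholders that follow the "half the power" example rather than measured values. It also assumes the other stages take the same time in every mode, which may not hold when CPU clocks change too.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class OperatingPoint:
    name: str           # label for the power mode
    detector_ms: float  # measured detector latency in this mode
    power_w: float      # measured sustained draw in this mode


def pick_operating_point(points: Sequence[OperatingPoint], fps: float,
                         other_stage_ms: float = 0.0) -> Optional[OperatingPoint]:
    """Lowest-power point whose total per-frame time fits the frame budget."""
    budget_ms = 1000.0 / fps
    feasible = [p for p in points
                if p.detector_ms + other_stage_ms <= budget_ms]
    if not feasible:
        return None
    return min(feasible, key=lambda p: p.power_w)


if __name__ == "__main__":
    candidates = [
        OperatingPoint("MODE_60W", detector_ms=7.0, power_w=26.0),   # placeholder watts
        OperatingPoint("MODE_30W", detector_ms=10.0, power_w=13.0),  # placeholder watts
    ]
    choice = pick_operating_point(candidates, fps=30.0, other_stage_ms=16.0)
    print(choice.name if choice else "no mode meets the frame budget")
```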

What this means for procurement

When sizing an edge AI box for a real deployment, the questions to ask the vendor aren't about TOPS. They're:

- What's the sustained power draw at the configured operating point, measured at the wall? Vendors should be able to answer this for representative workloads. If they can only quote TDP, treat that as a red flag.

- What's the thermal profile in the target enclosure at the target ambient temperature? A fanless box at 40°C is not the same as a bench unit at 22°C with active cooling.

- What's the power floor when idle? Some boards draw 5W idle, some draw 15W idle. On a mission with intermittent compute load, the idle floor often dominates the budget.

- Does the power scale linearly with the workload, or is there a discontinuity? Some boards have a low-power state and a normal-power state with no smooth transition. If your workload straddles the transition, you'll get worse mileage than the per-state numbers predict.

The honest answer to all four questions is "we measured it on representative workloads and here's the data." The dishonest answer is "up to X TOPS."
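The idle-floor question can be checked with a duty-cycle average. This is a minimal sketch; the 25W active draw and the 10% active fraction are hypothetical, while the 5W and 15W idle figures are the examples from the list above.

```python
def average_power_w(active_w: float, idle_w: float, active_fraction: float) -> float:
    """Time-weighted average draw for a board that is active part of the time."""
    if not 0.0 <= active_fraction <= 1.0:
        raise ValueError("active_fraction must be in [0, 1]")
    return active_fraction * active_w + (1.0 - active_fraction) * idle_w


def idle_share(active_w: float, idle_w: float, active_fraction: float) -> float:
    """Fraction of the total energy that is spent while idle."""
    total = average_power_w(active_w, idle_w, active_fraction)
    return (1.0 - active_fraction) * idle_w / total


if __name__ == "__main__":
    for idle in (5.0, 15.0):
        avg = average_power_w(25.0, idle, active_fraction=0.10)  # assumed workload
        share = idle_share(25.0, idle, active_fraction=0.10)
        print(f"idle {idle:.0f} W: average {avg:.1f} W, {share:.0%} of energy spent idle")
```

With these assumed numbers, most of the energy goes to idling on either board, which is why the idle floor belongs on the procurement checklist.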

The compounding effect

Power efficiency compounds. A pipeline that runs at 13W instead of 30W:

- Extends battery endurance by about 2.3× (30W ÷ 13W) for the same battery capacity

- Eliminates active cooling requirements (most fans turn on around 25-30W in compact enclosures)

- Reduces thermal stress on neighboring components — which extends MTBF

- Cuts the compute energy cost of grid-tethered installations by roughly 57% (1 − 13/30) over a multi-year deployment

- Opens deployment scenarios that 30W pipelines simply can't reach (small drones, solar-powered sensors, vehicle aux-power outlets)

These aren't marginal benefits. They're the difference between a pipeline that ships and a pipeline that doesn't.
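For a tethered installation, the comparison can be expressed as annual energy. This sketch assumes continuous 24/7 operation at a constant draw and covers the compute payload only; it leaves out cooling, power-supply losses, and electricity pricing, all of which you would need to add from your own data.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_kwh(power_w: float, hours: float = HOURS_PER_YEAR) -> float:
    """Energy used in a year at a constant draw, in kilowatt-hours."""
    return power_w * hours / 1000.0


def saving_fraction(low_w: float, high_w: float) -> float:
    """Fraction of energy saved by running at low_w instead of high_w."""
    return 1.0 - low_w / high_w


if __name__ == "__main__":
    for draw in (13.0, 30.0):
        print(f"{draw:.0f} W continuous: {annual_energy_kwh(draw):.1f} kWh per year")
    print(f"saving: {saving_fraction(13.0, 30.0):.0%}")
```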

The TOPS trap

If you remember one thing: TOPS is a marketing number, not an engineering number. It tells you what the silicon could theoretically do under perfect conditions. It doesn't tell you what your workload will actually cost in watts, what your enclosure can dissipate, what your battery can sustain, or what your mission needs.

Watts at the wall, measured under your actual workload, in your actual enclosure, at your actual ambient — that's the engineering number. Get that measurement before you commit to a board. Most edge AI deployment failures we've seen trace back to skipping this measurement and trusting the spec sheet.
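One way to reduce a logged measurement run to a sustained figure is sketched below. It assumes you already have evenly spaced power samples in watts from an external meter or battery-rail logger; how those samples are collected is outside this sketch, and the function name, the warm-up handling, and the nearest-rank percentile are illustrative choices.

```python
from statistics import mean
from typing import Sequence


def summarize_power_log(samples_w: Sequence[float], sample_period_s: float,
                        warmup_s: float = 0.0) -> dict:
    """Summarize steady-state power after discarding an initial warm-up window."""
    if sample_period_s <= 0:
        raise ValueError("sample_period_s must be positive")
    skip = int(warmup_s / sample_period_s)   # samples that fall in the warm-up
    steady = list(samples_w[skip:])
    if not steady:
        raise ValueError("no samples left after warm-up")
    ordered = sorted(steady)
    # Nearest-rank style 95th percentile, clamped to the last sample.
    p95 = ordered[min(len(ordered) - 1, int(0.95 * len(ordered)))]
    return {
        "mean_w": mean(steady),
        "p95_w": p95,
        "peak_w": max(steady),
        "duration_s": len(steady) * sample_period_s,
    }


if __name__ == "__main__":
    log = [20.0, 20.0, 13.0, 13.0, 13.0, 14.0, 12.0, 13.0]  # made-up samples
    print(summarize_power_log(log, sample_period_s=1.0, warmup_s=2.0))
```

The mean drives battery endurance, while the 95th percentile and peak are the figures to compare against supply limits and thermal headroom. A run should be long enough (for example, the 20-minute mission length) for throttling to show up in the log.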

Related reading:

- The Four-Tracker Spectrum: Picking the Right Multi-Object Tracker for Edge Vision — tracker choice as a power-budget decision

- Why Your TensorRT FP16 Speedup Looks Smaller Than Promised — quantization is the biggest single power lever

- The AI Junior on a Box: What We Actually Sell — the box is sized around power-per-task, not TOPS
